The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

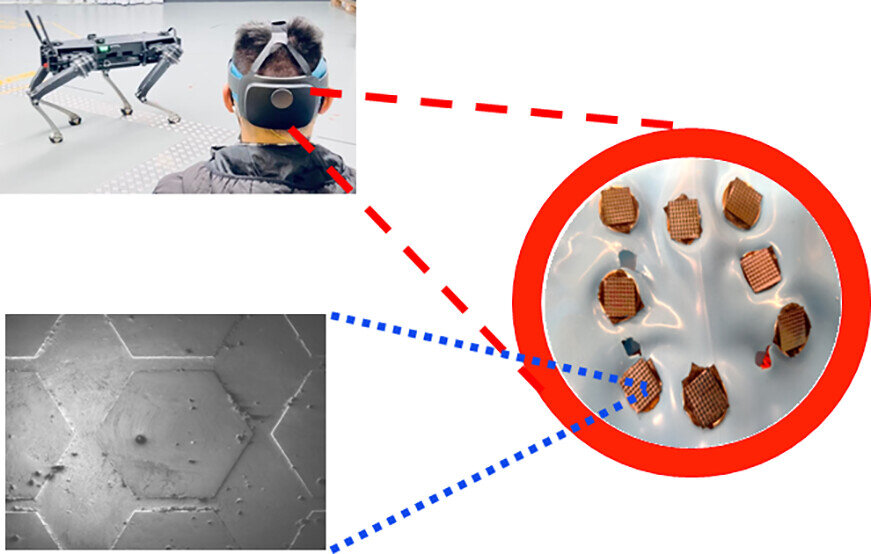

The Australian Army has demonstrated and trialed AI-powered robot dogs controlled by soldiers' brain signals via brain-computer interfaces and augmented reality headsets. While no harm has occurred, the technology's military application and potential weaponization present credible risks of future harm or misuse. [AI generated]