The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

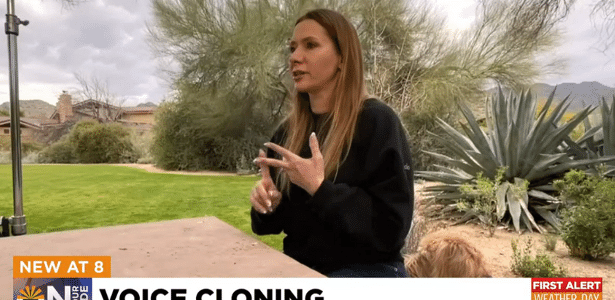

Scammers used AI voice cloning technology to convincingly imitate the voice of an Arizona mother's 15-year-old daughter, faking a kidnapping and demanding a ransom. The realistic AI-generated voice caused severe emotional distress and nearly led to financial harm, highlighting the risks of AI misuse in extortion schemes. [AI generated]
