The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

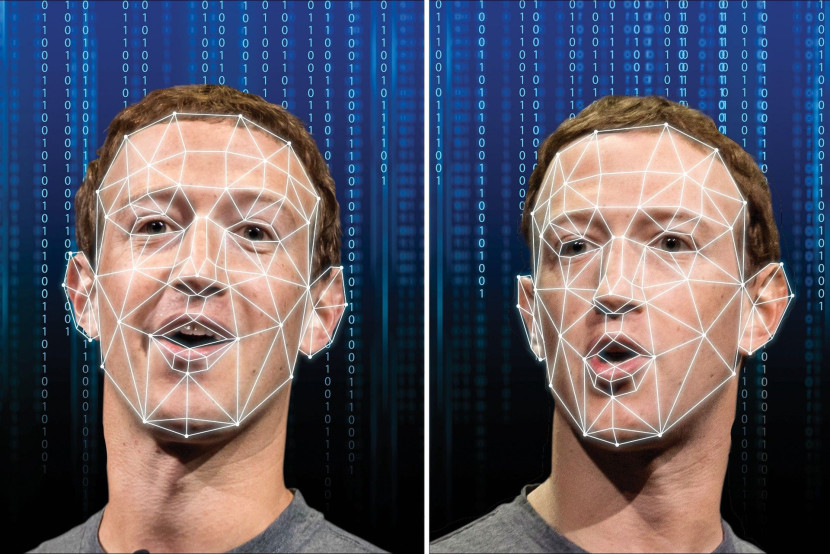

AI-powered deepfake technology is increasingly used in Indonesia for financial fraud, extortion via pornographic content, political manipulation, and spreading misinformation. Demand for deepfake services on darknet forums is high, leading to significant harm to individuals’ finances, privacy, and reputations, and threatening democratic processes. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI systems (deepfake generation technology) being used maliciously to create harmful content that directly harms individuals' privacy, reputation, and finances. The article reports that these harms are already occurring, with active demand for and supply of such services on the darknet. This meets the criteria for an AI Incident because the use of the AI system has directly led to significant harms, including financial loss and violations of privacy and rights. The AI involvement is the malicious use of AI-generated deepfakes, and the harm is realized rather than potential. [AI generated]
