The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

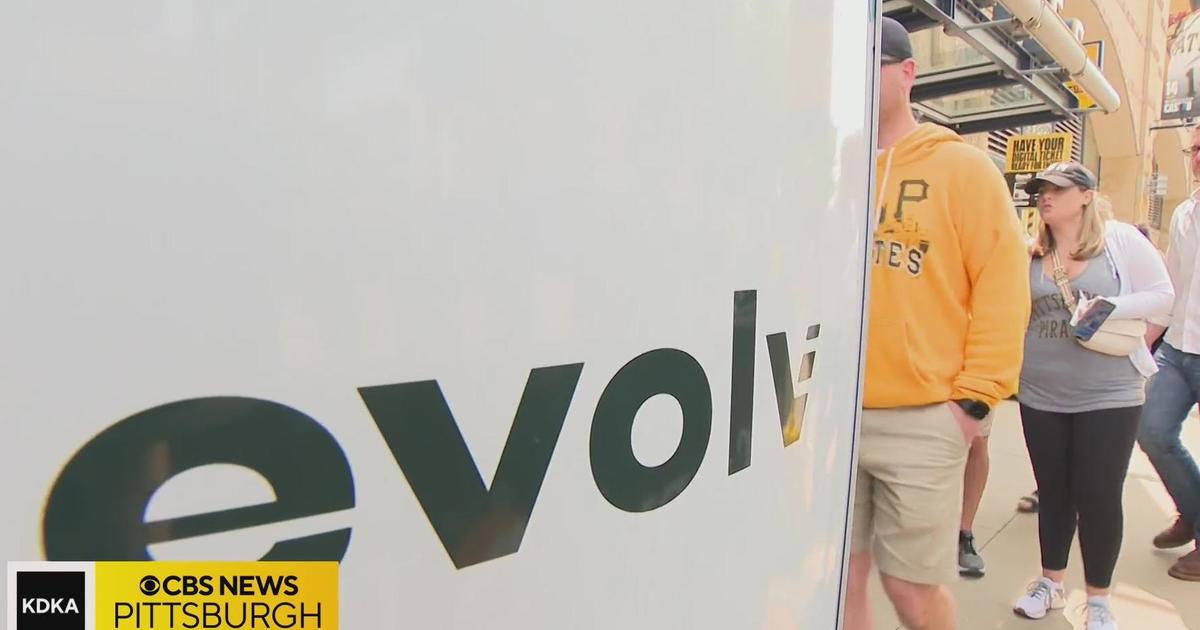

Evolv Technology's AI-powered weapons scanner, deployed in a New York school at a cost of nearly $4 million, failed to detect a large knife, and a student was subsequently stabbed. Investigations revealed that the system frequently misses knives, raising concerns about its effectiveness and about the safety risks of relying on such AI security solutions. [AI generated]