The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

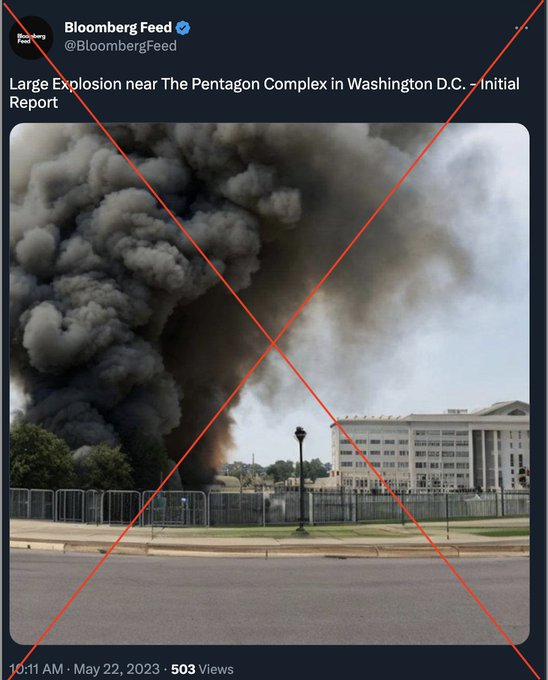

An AI-generated fake image depicting an explosion near the Pentagon was spread by a verified Twitter account impersonating Bloomberg, leading major news outlets to report it as real. The misinformation caused panic, resulting in a significant drop in the S&P 500 and millions of dollars in financial losses before the hoax was debunked. [AI generated]
