The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

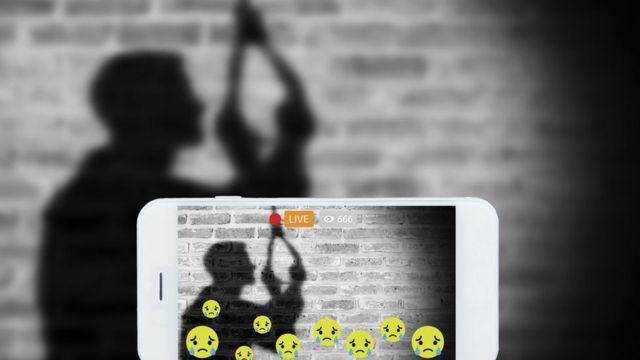

Meta and its subcontractor Sama face lawsuits from more than 180 former Facebook content moderators in Kenya, who allege severe mental health harm from reviewing violent and hateful content flagged by AI systems. The legal actions highlight inadequate support and poor working conditions linked to AI-assisted moderation. [AI generated]