The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

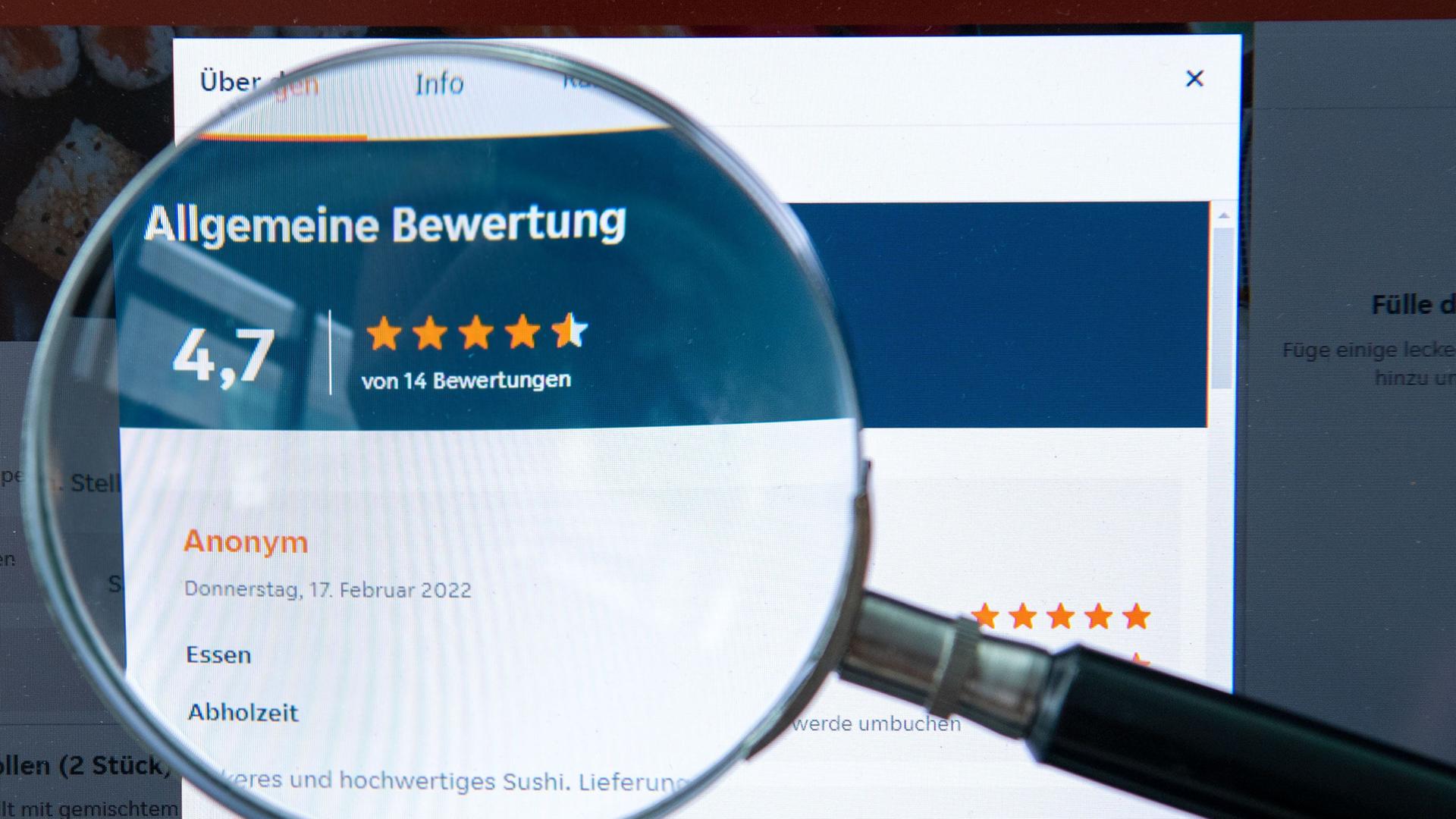

Generative AI systems like ChatGPT are increasingly used to create fake online reviews and profiles that are nearly indistinguishable from genuine ones. This misuse has already led to consumer deception and unfair competition, and platforms such as Amazon have taken legal action against providers of AI-generated fake reviews. [AI generated]