The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

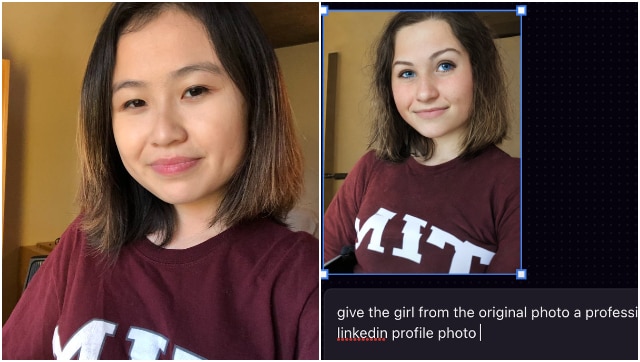

Rona Wang, an Asian MIT student, used Playground AI to create a professional headshot, but the AI altered her appearance to make her look white, giving her lighter skin and blue eyes. The incident highlights persistent racial bias in AI image generators and has sparked public concern over discrimination and misrepresentation. [AI generated]