The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

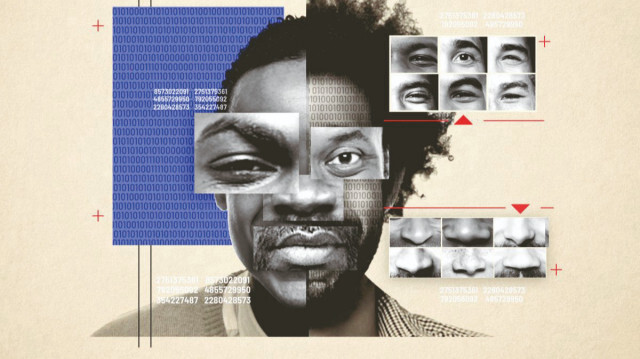

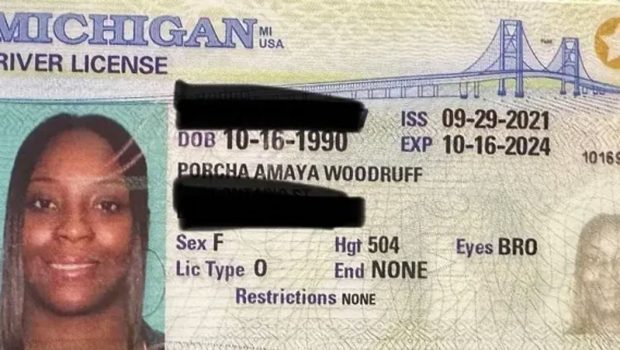

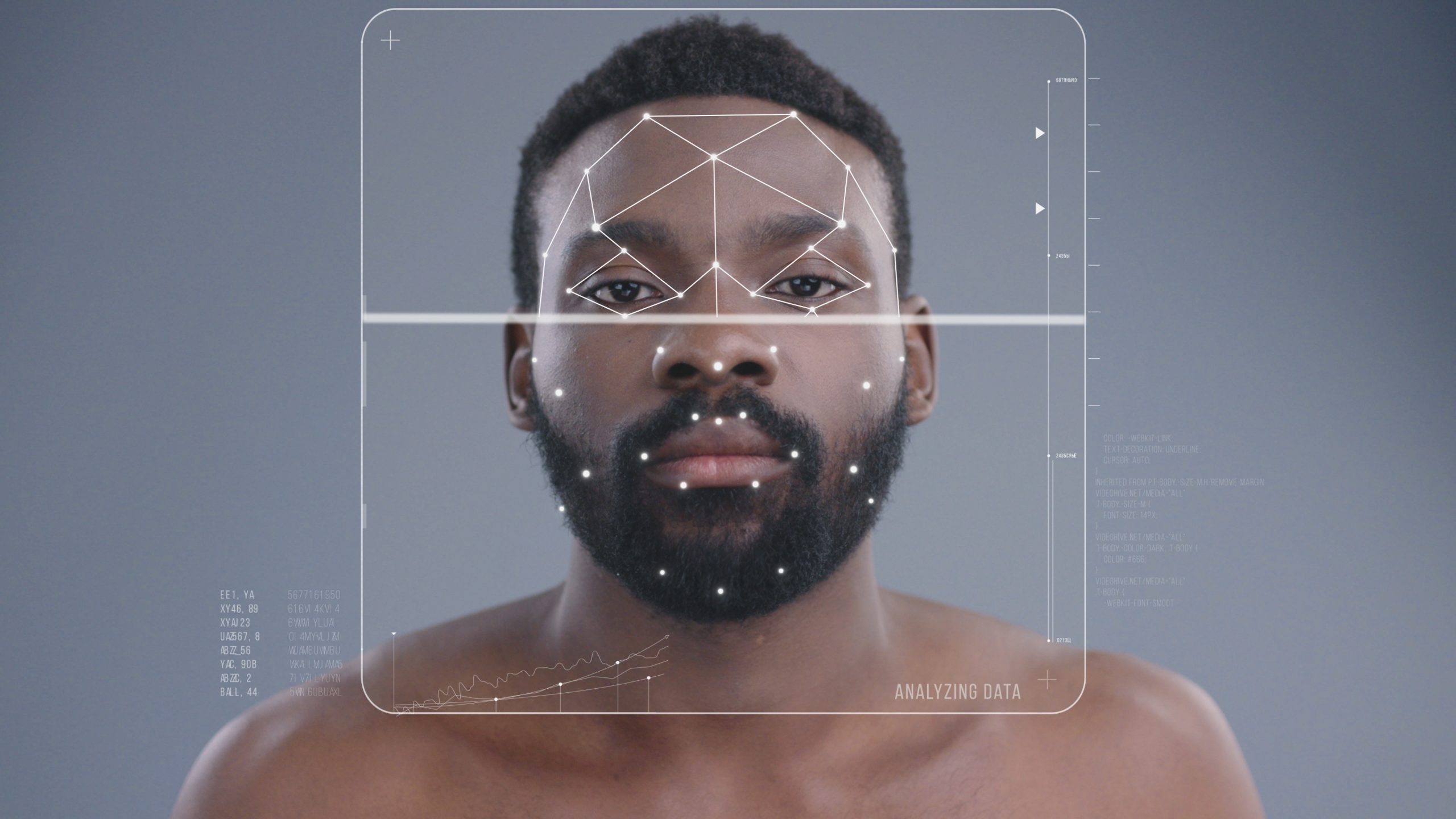

Porcha Woodruff, a Black woman eight months pregnant, was wrongfully arrested by Detroit police for carjacking after facial recognition AI misidentified her. The incident caused her physical and emotional harm, highlighting the technology’s flaws, especially in identifying people of color. Woodruff is now suing the city for false arrest. [AI generated]
