The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

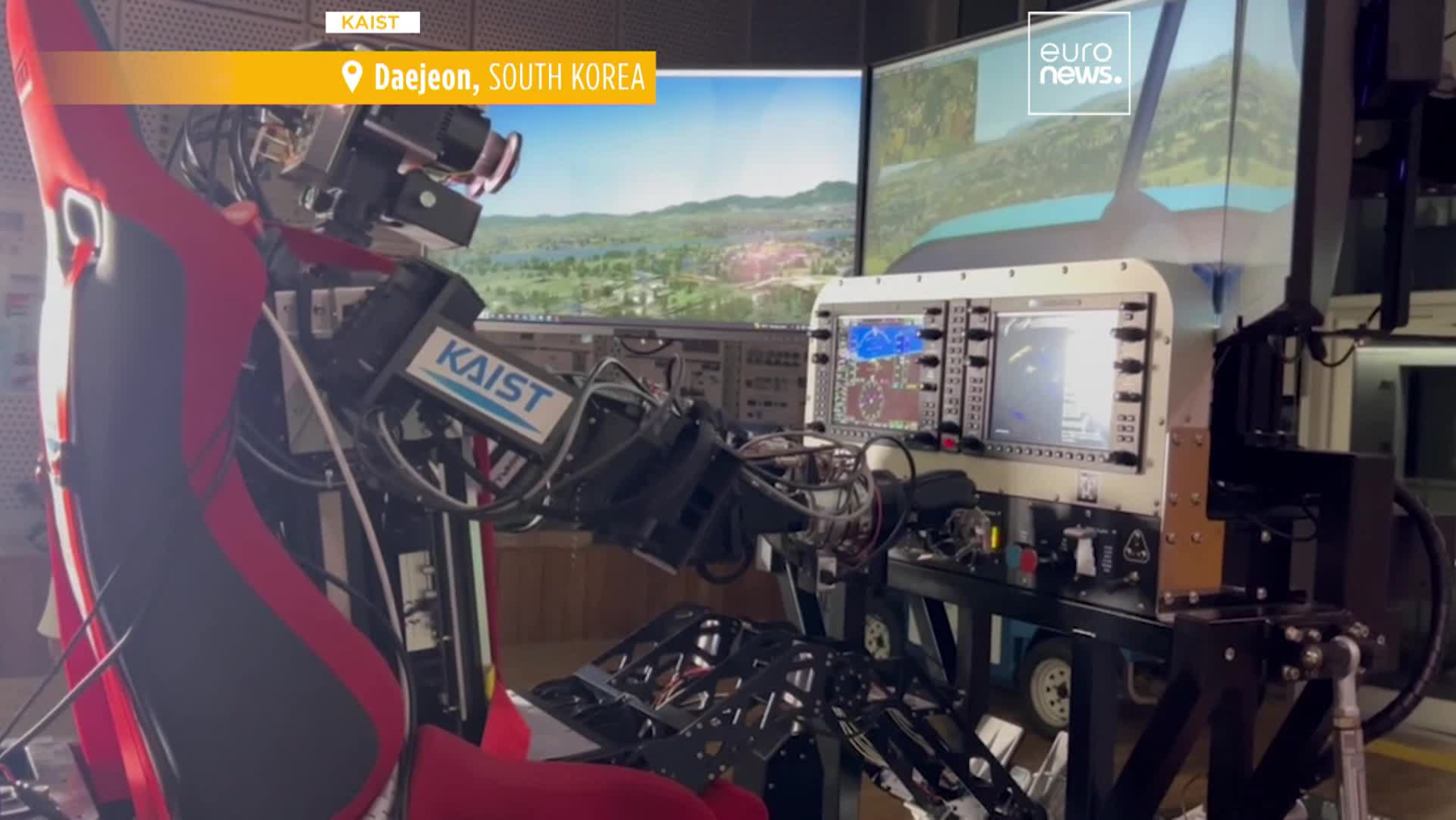

Researchers at KAIST have developed Pibot, a humanoid robot powered by AI and large language models that can pilot aircraft without cockpit modifications. No incidents have occurred, but deploying it in safety-critical aviation and military roles poses credible future risks if the AI malfunctions or is misused. [AI generated]
