The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

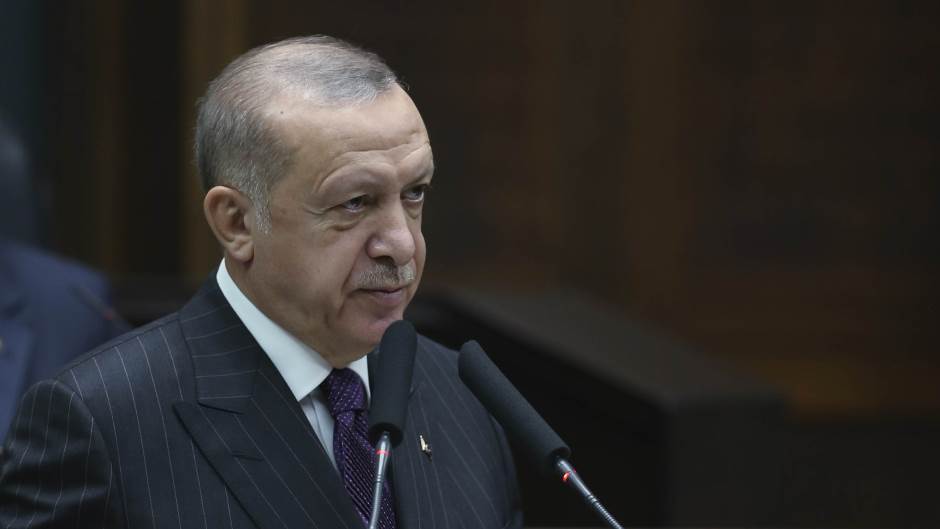

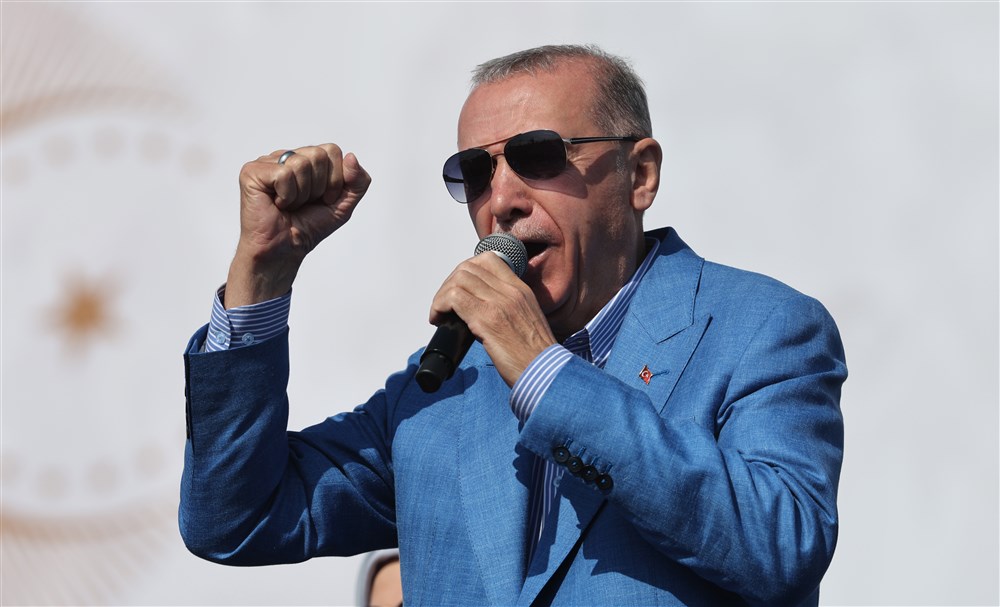

A fraudster used AI to clone President Erdoğan's voice and made deceptive calls to businesspeople and senior officials for financial gain. Turkey's National Intelligence Organization (MİT) uncovered the scheme, which involved more than 10 phone lines, and identified and apprehended the suspect. Authorities warn that AI-driven identity fraud is increasing. [AI generated]