The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

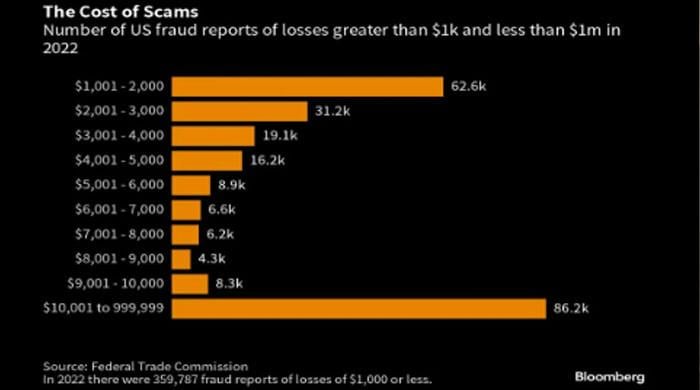

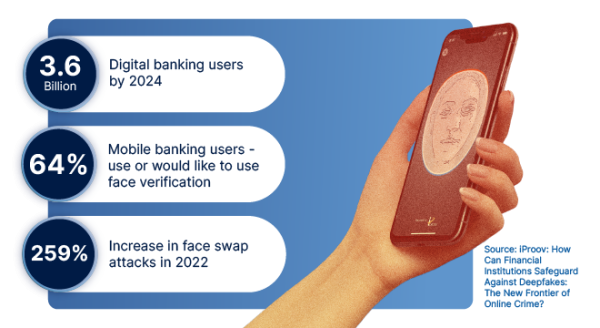

Criminals are using AI-generated deepfake voices and synthetic images to carry out impostor scams, deceiving victims and bypassing security systems. This has led to a surge in financial fraud, with US consumers losing $8.8 billion to fraud in a single year, a trend that has alarmed regulators and the financial industry.[AI generated]