The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

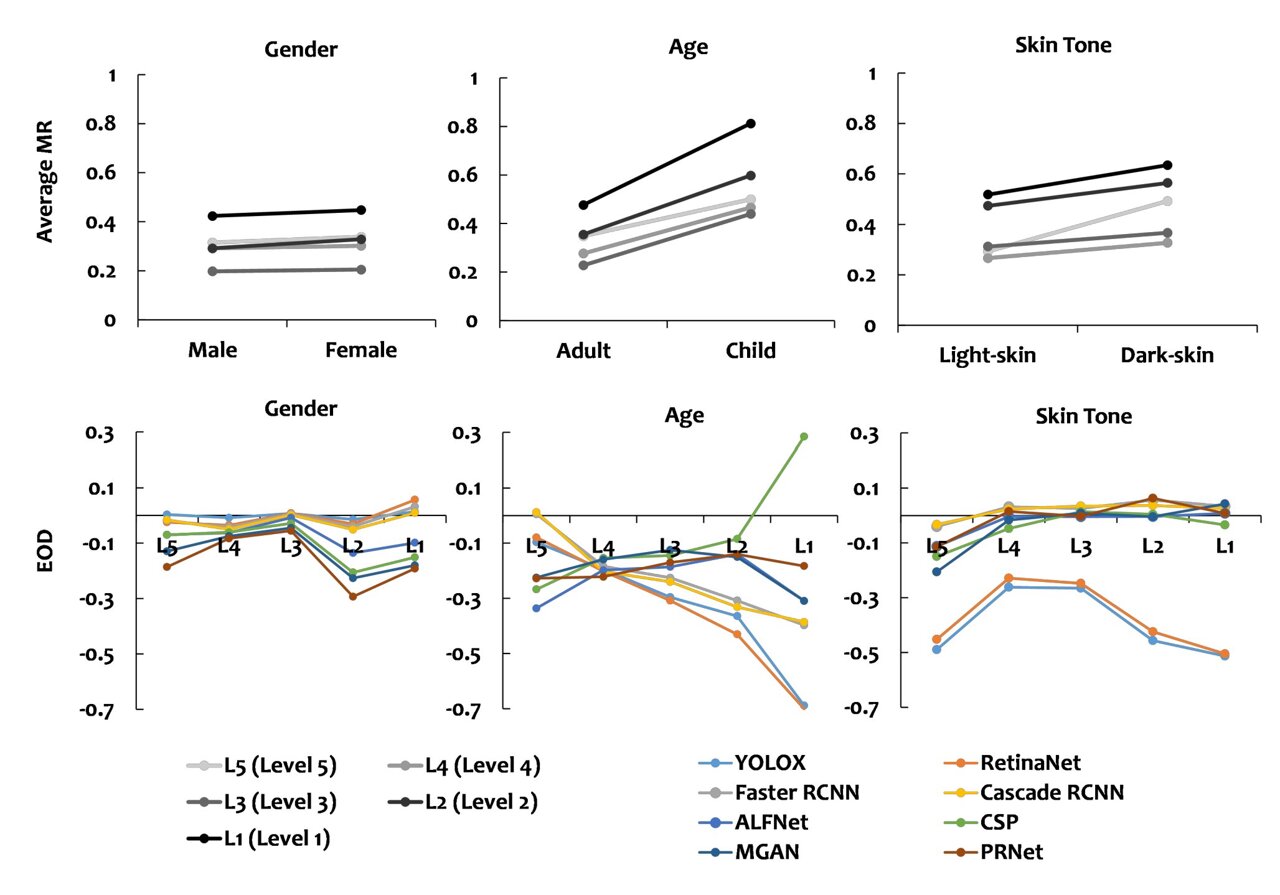

Researchers from King's College London and Peking University found that AI pedestrian detection systems used in autonomous vehicles are significantly less accurate at identifying children and dark-skinned individuals, especially in low-light conditions. This bias increases safety risks for these groups, highlighting a critical flaw in current AI training data and system design. [AI generated]