The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

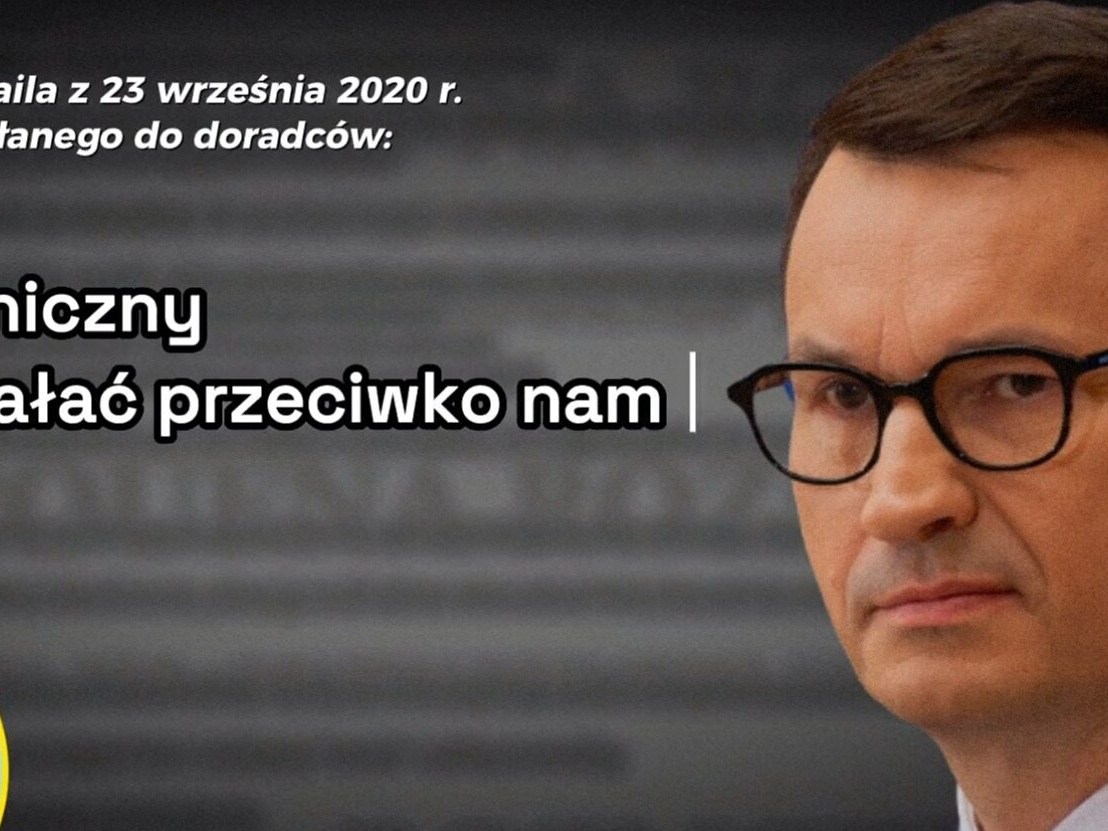

Platforma Obywatelska released a political ad that used AI to generate a synthetic voice of Prime Minister Mateusz Morawiecki reading allegedly leaked emails. The deepfake audio was initially not labelled as synthetic, which misled viewers and raised concerns about misinformation, deepfake risks, and damage to public trust in democratic processes. [AI generated]

Why is our monitor labelling this an incident or hazard?

The article explicitly states that the opposition party used AI to generate a fake voice of the Prime Minister in a political ad without disclosing that the voice was synthetic. This is a direct use of AI technology that led to misinformation and deception. It can reasonably be inferred that this harms the community by spreading false information and undermining democratic processes. The event therefore qualifies as an AI Incident: the harm has been realized, and it stems from the use of an AI system to generate misleading content that deceives the public. [AI generated]