The information displayed in the AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

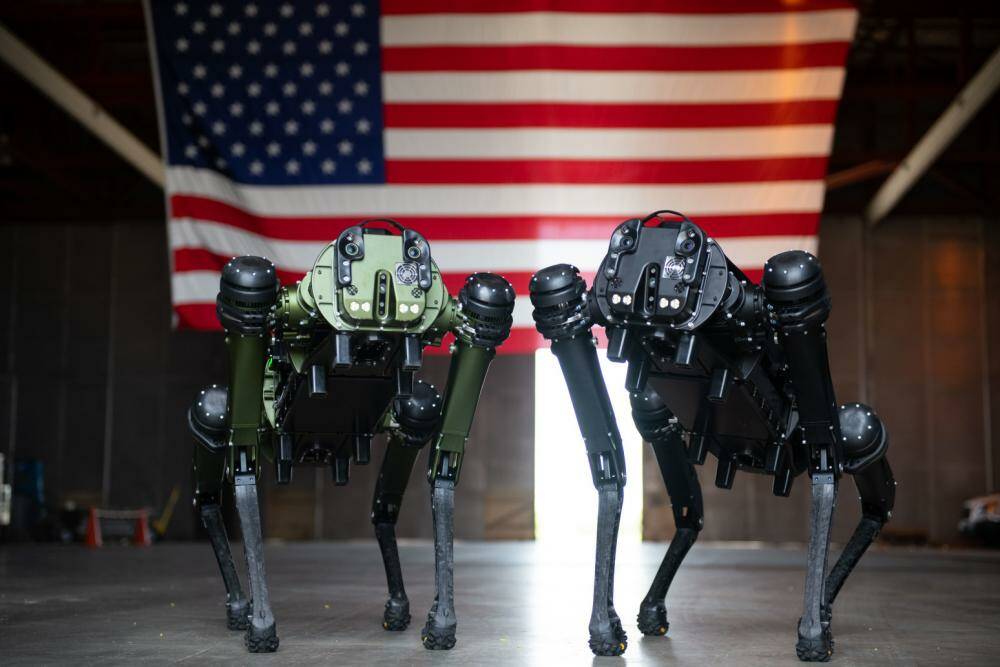

The US Army is experimenting with mounting advanced rifles on AI-enabled quadruped robots, such as Ghost Robotics' Vision 60, to enhance combat capabilities. While these weaponized robot dogs are not yet deployed, their development raises significant ethical and safety concerns about potential future harm from autonomous or semi-autonomous armed AI systems.[AI generated]