The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

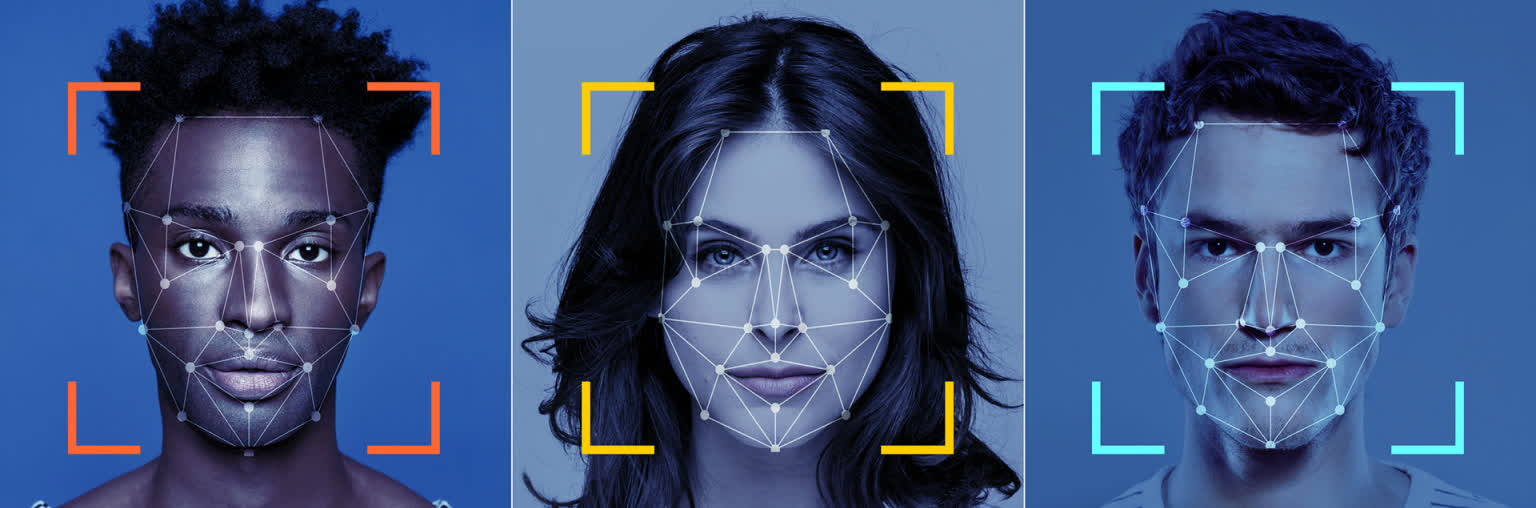

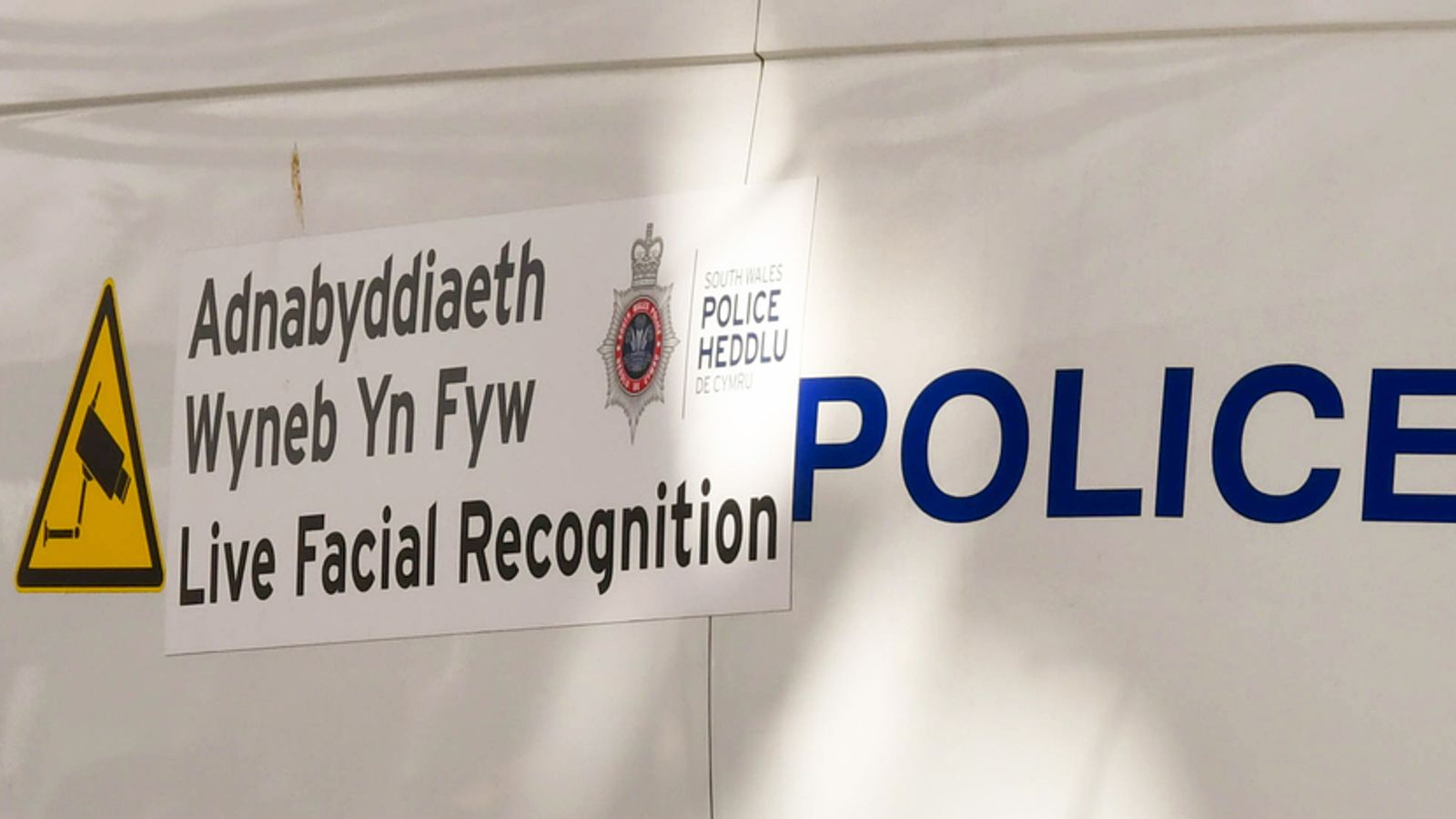

The UK government and Ministry of Defence are seeking to expand AI-based facial recognition in policing and security, soliciting industry proposals for national deployment. This move has sparked criticism from privacy advocates and rights groups over risks of bias, mass surveillance, and potential human rights violations, though no direct harm has yet occurred.[AI generated]