The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

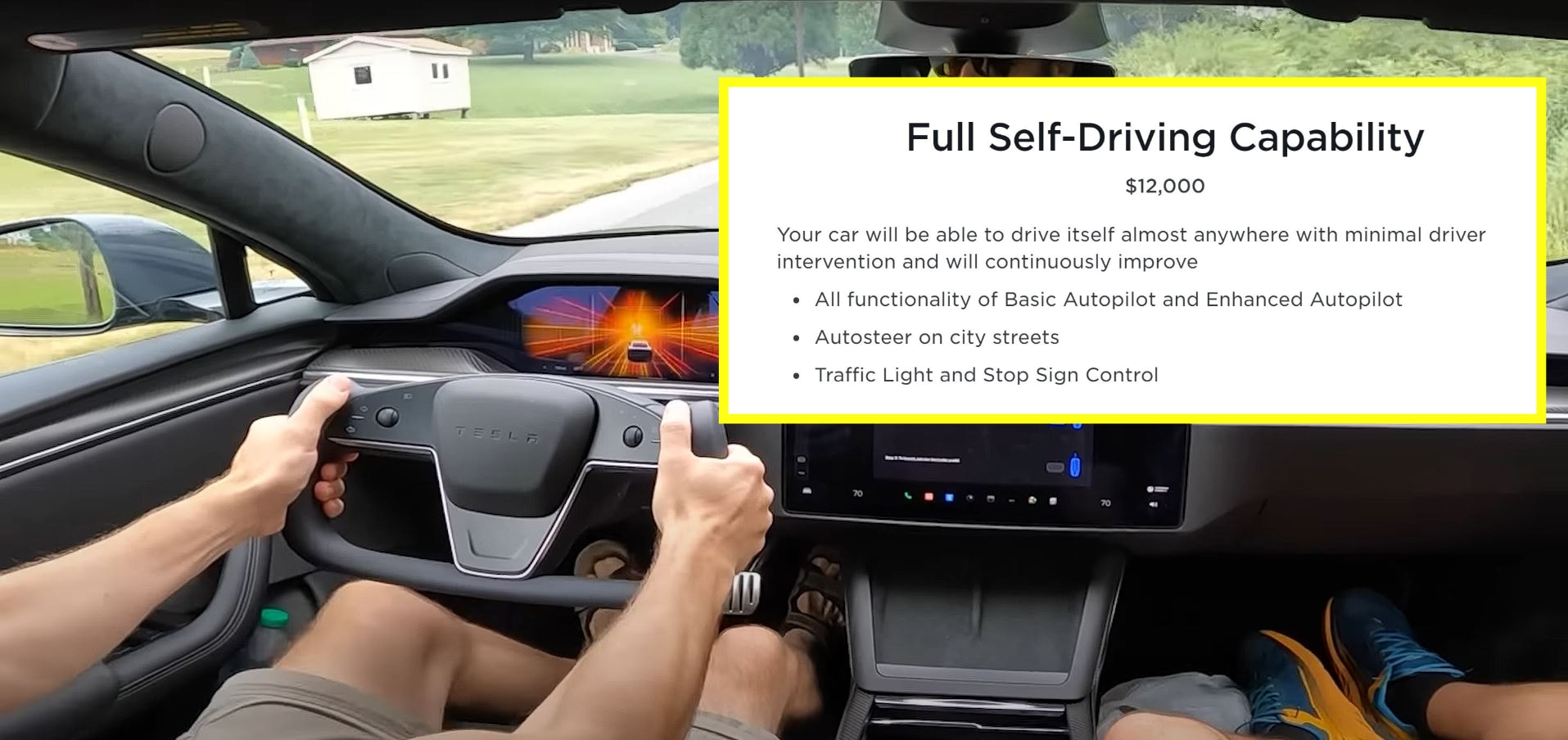

Fred Lambert, editor-in-chief of Electrek, reported that Tesla's Full Self-Driving (FSD) Beta software (v11.4.7) nearly caused two high-speed crashes by aggressively steering his Model 3 toward a highway median. The AI system's malfunction posed a direct risk to driver safety, highlighting ongoing concerns about autonomous vehicle reliability. [AI generated]