The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

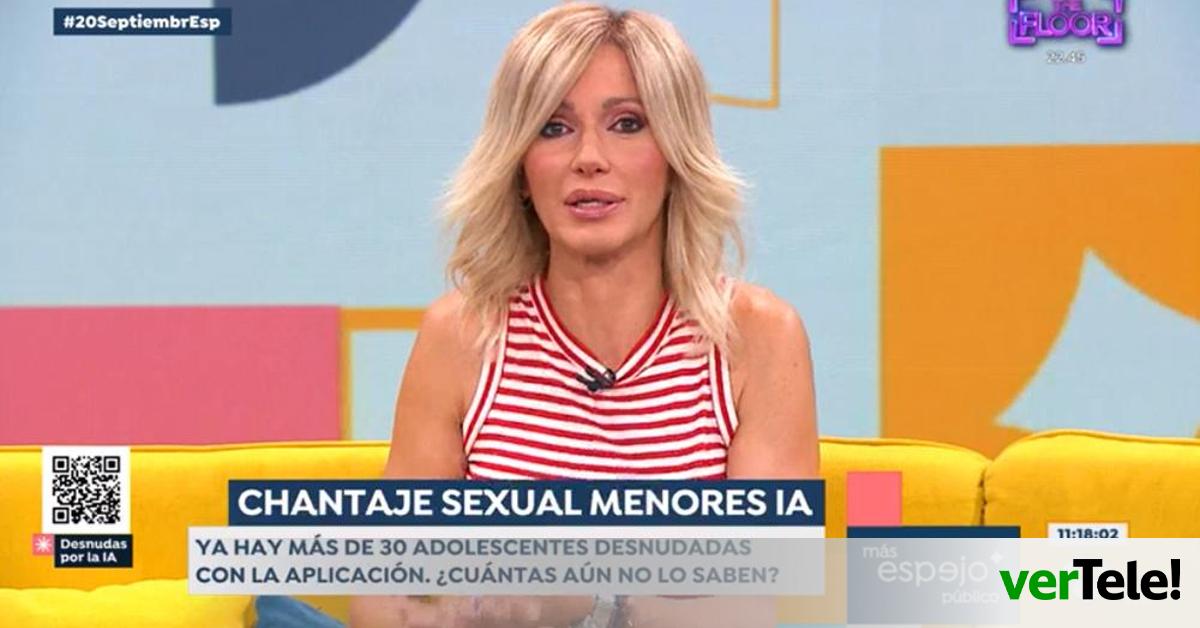

AI systems were used to create and disseminate manipulated pornographic images of TV presenter Susanna Griso and numerous minors in Spain, violating their rights and causing emotional harm. The deepfake images, generated without consent, highlight the growing misuse of AI for sexual exploitation and privacy violations. [AI generated]