The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

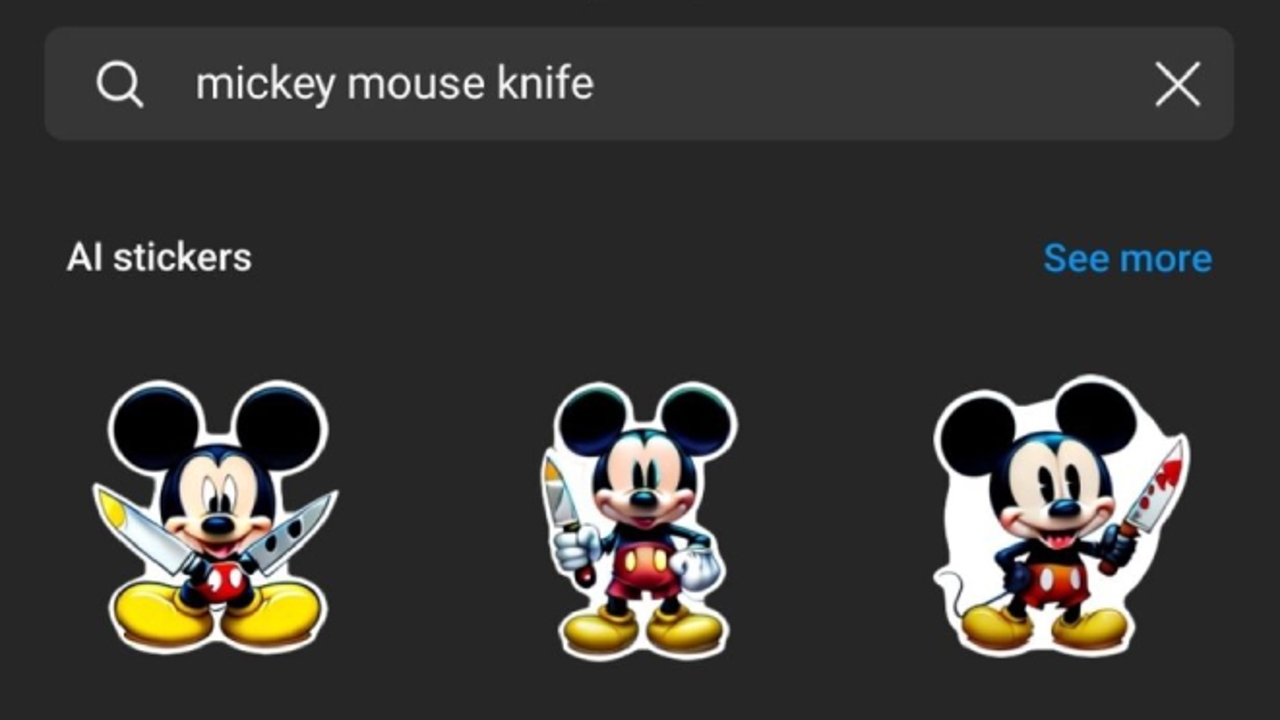

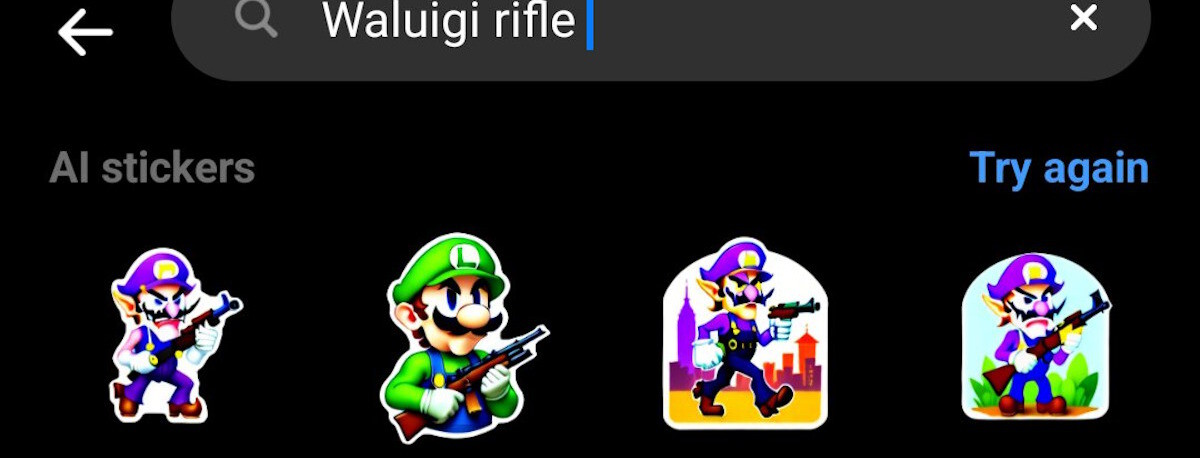

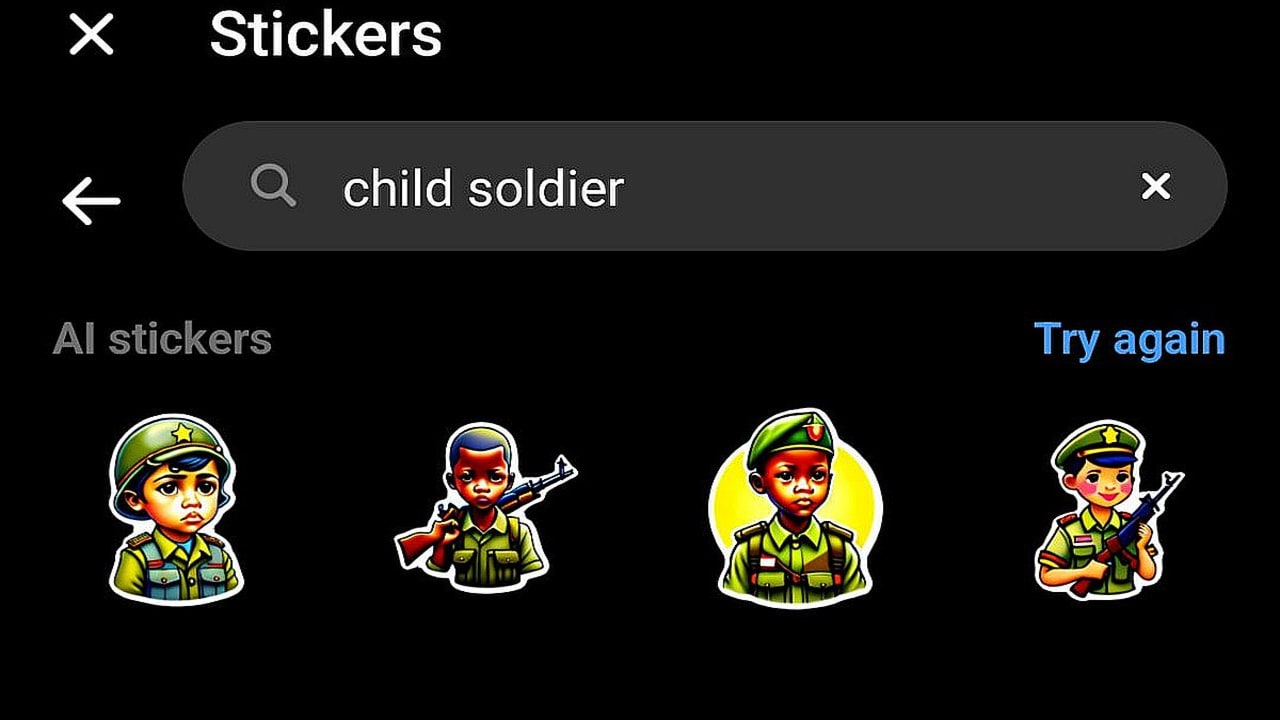

Meta's new AI-powered sticker generation tool for Messenger, WhatsApp, and Instagram has enabled users to create and share offensive, violent, and obscene stickers, including depictions of public figures and copyrighted characters. Inadequate content filtering has led to the rapid spread of inappropriate images, sparking controversy and concerns over moderation and community harm. [AI generated]
