The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

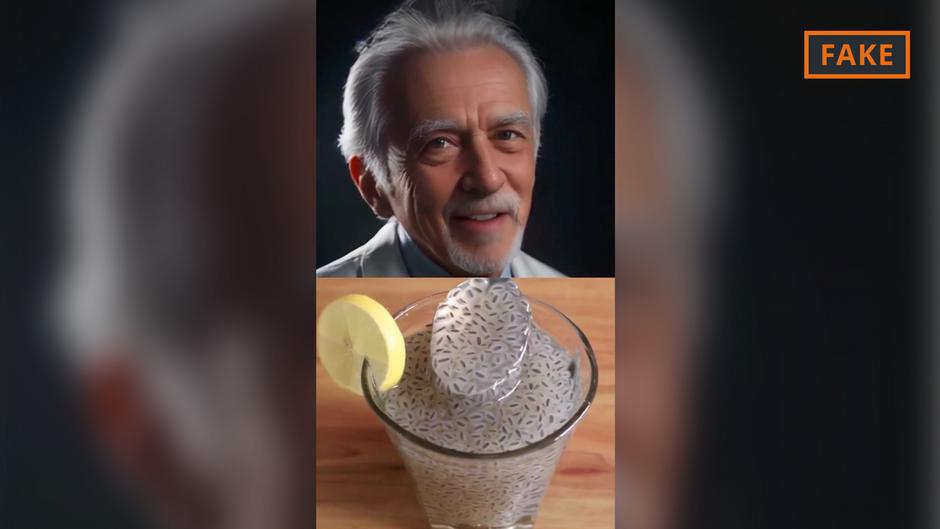

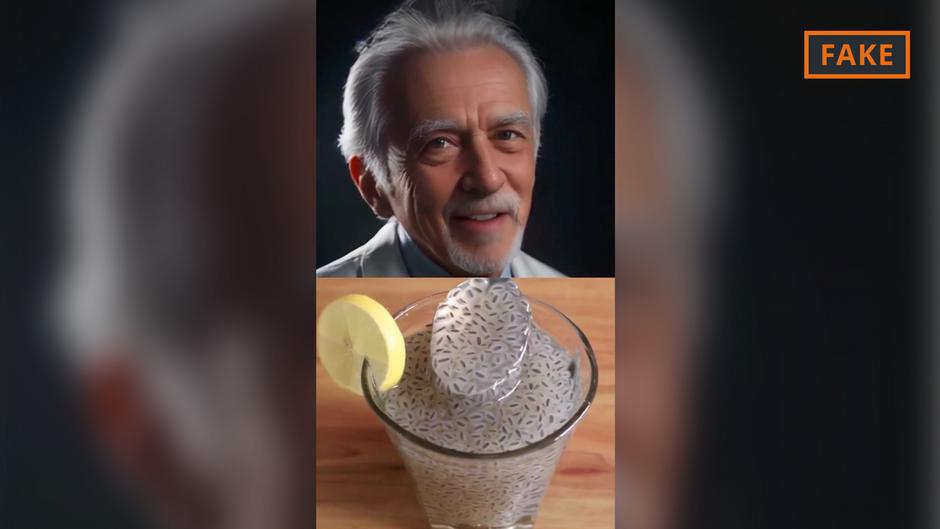

AI-generated bots impersonating doctors have spread false medical advice, such as the misleading claim that chia seeds cure diabetes, to millions of people on social media. These widely viewed and shared videos endanger public health by exploiting trust in medical professionals and disseminating inaccurate health information. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI systems generating fake doctor personas and false medical claims on social media, which directly leads to harm by spreading misinformation that can negatively affect people's health decisions. Because the AI-generated content impersonates authoritative medical figures, viewers are more likely to trust it, which increases the risk of harm. This fits the definition of an AI Incident because the use of the AI system has directly led to harm to health and to communities (harms a and d). [AI generated]