The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

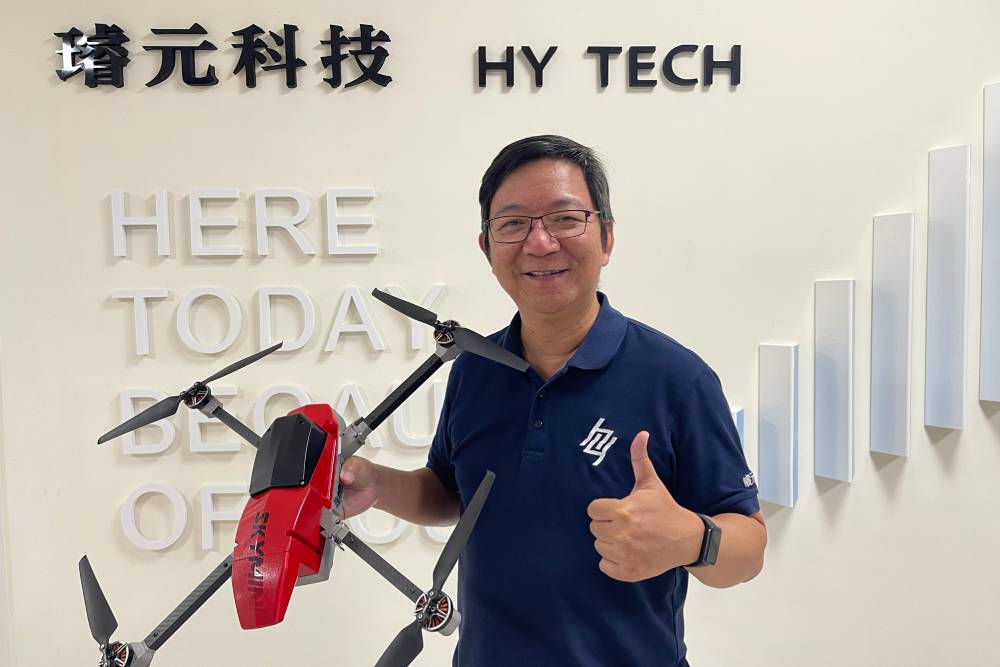

Taiwan's Ministry of National Defense will procure 1,779 military drones from local firms, integrating Acer's AI technology for autonomous object recognition, enemy identification, and self-directed flight. The AI systems, to be tested soon, aim to enhance battlefield awareness but raise concerns about potential risks in military applications.[AI generated]