The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

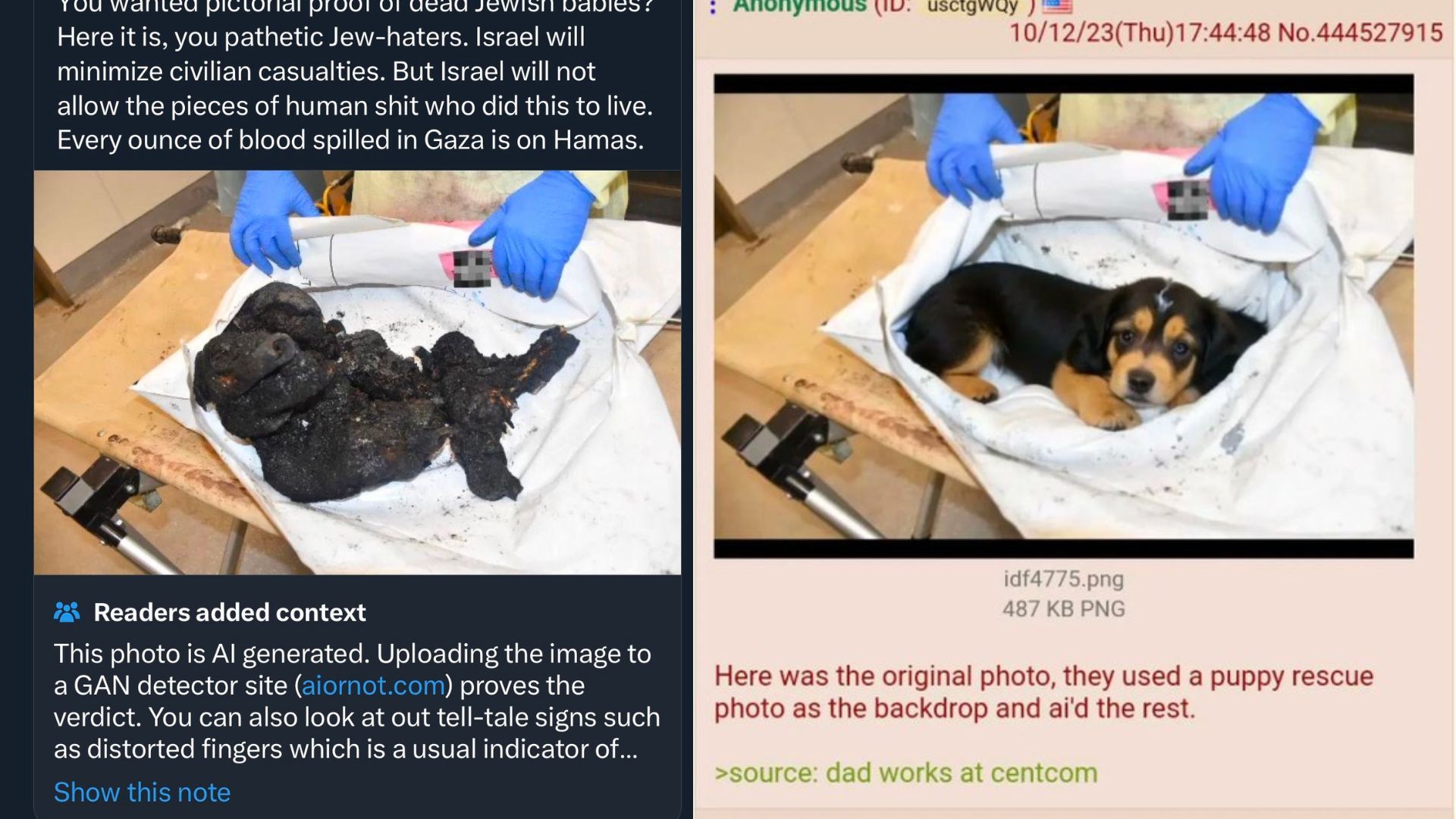

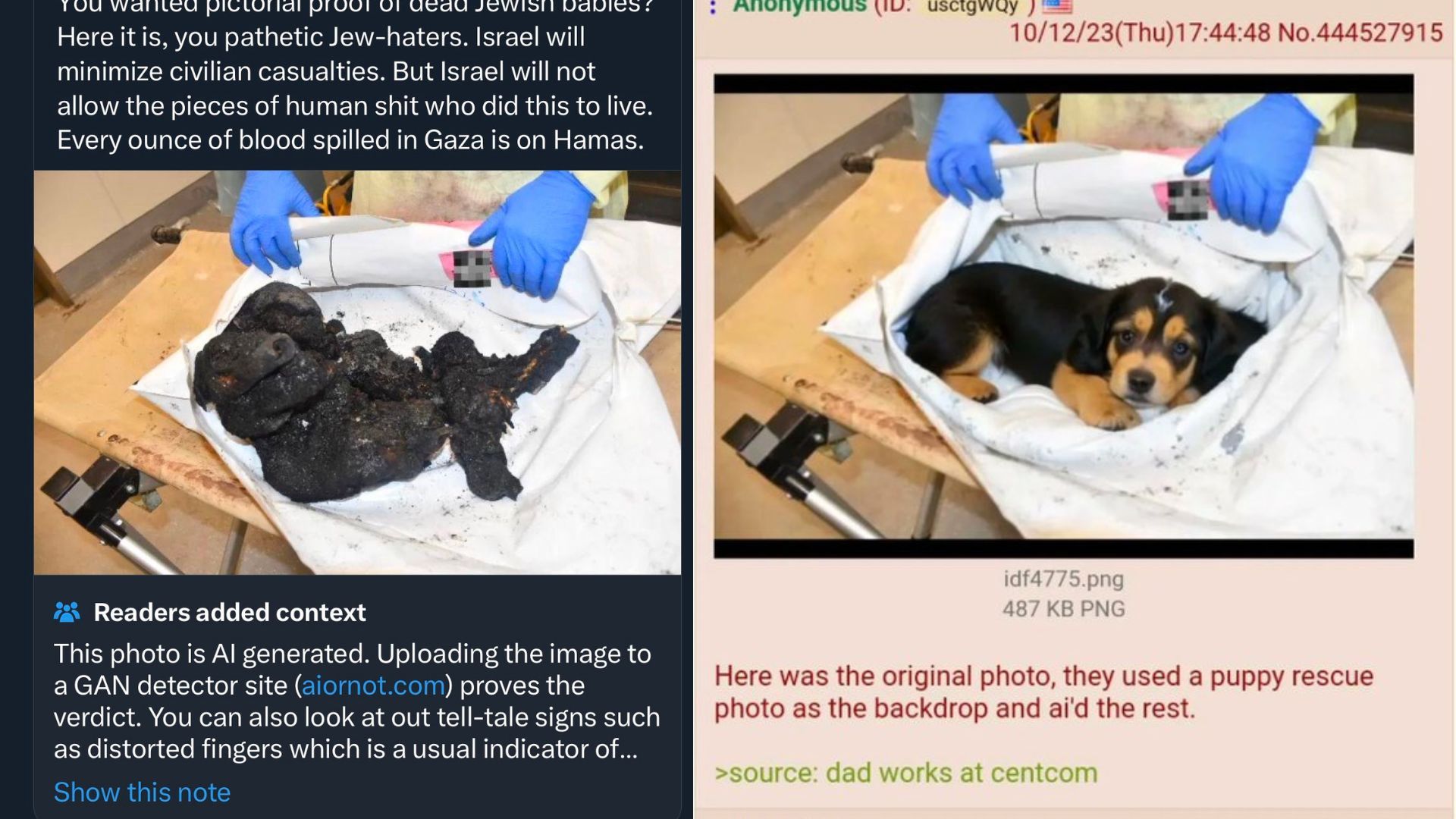

Conservative commentator Ben Shapiro shared an AI-generated image purporting to show a 'burnt Jewish baby' following a Hamas attack on Israel. The image, confirmed as fake by detection tools, was used to promote a misleading narrative, fueling misinformation and exacerbating tensions during the conflict. [AI generated]

Why is our monitor labelling this as an incident or hazard?

The event involves the use of an AI system to generate a graphic and misleading image that is being disseminated publicly during a conflict. Such content can spread misinformation and inflame tensions, which constitutes harm to communities and potentially violates rights. Because the AI-generated image has been shared and is influencing public perception, this is a realized harm linked to the use of an AI system. [AI generated]