The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

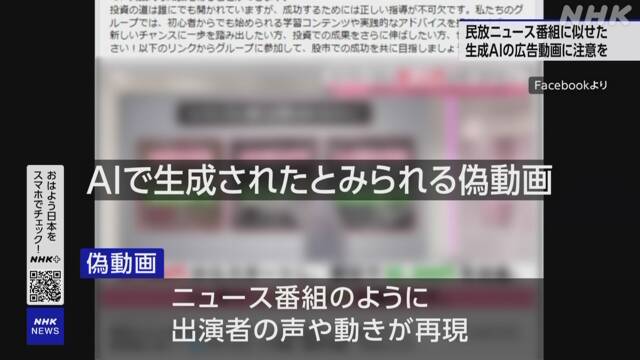

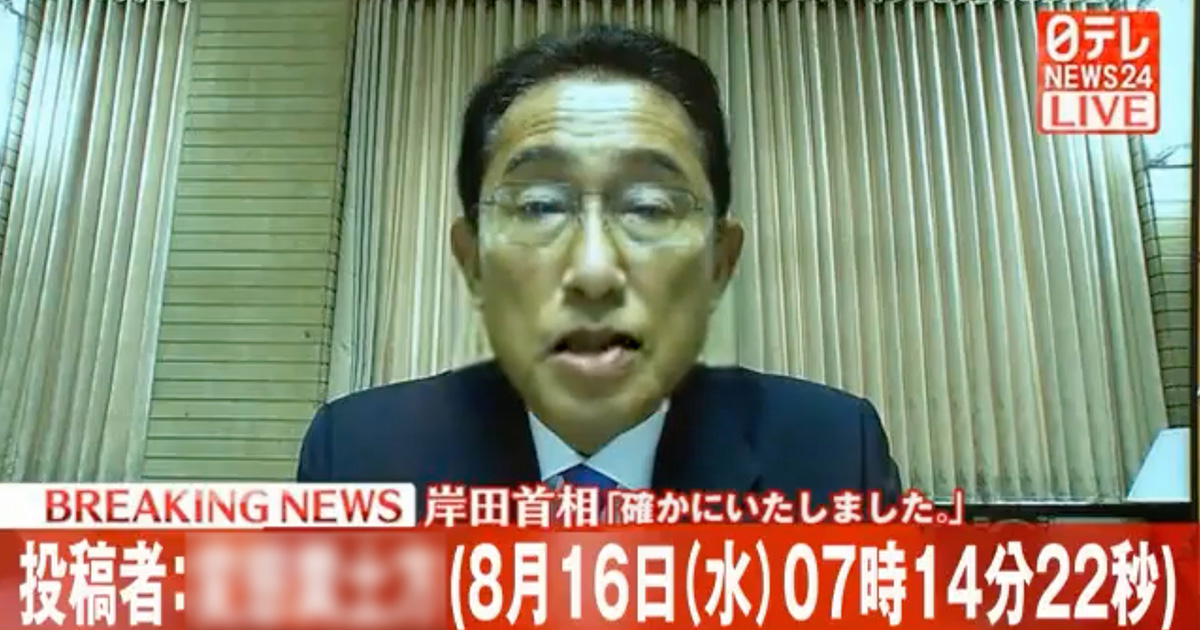

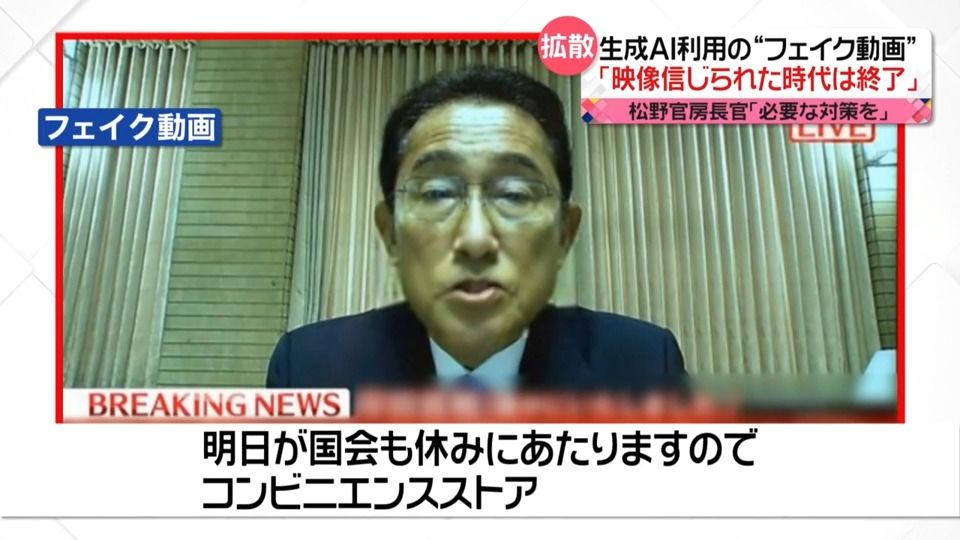

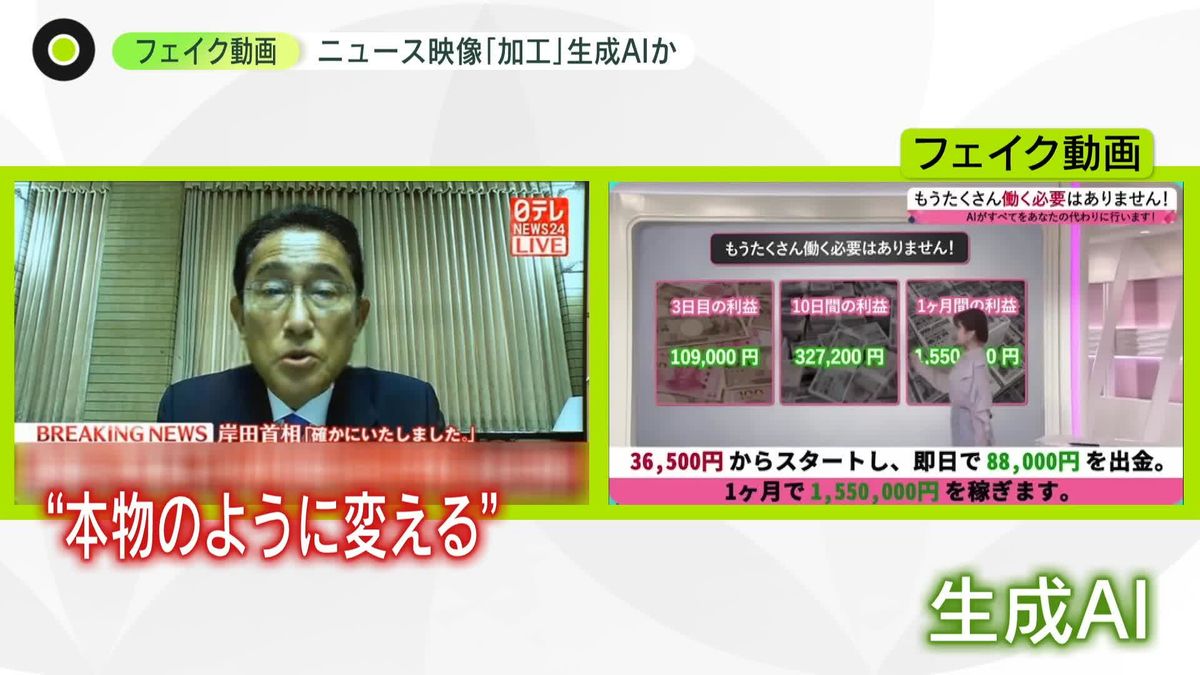

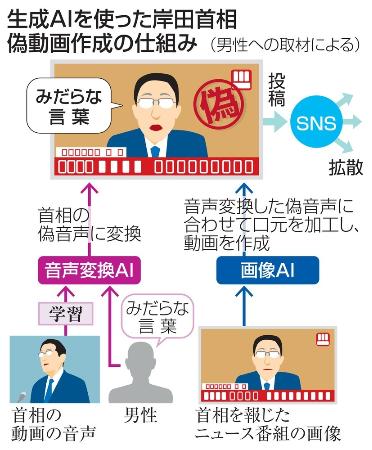

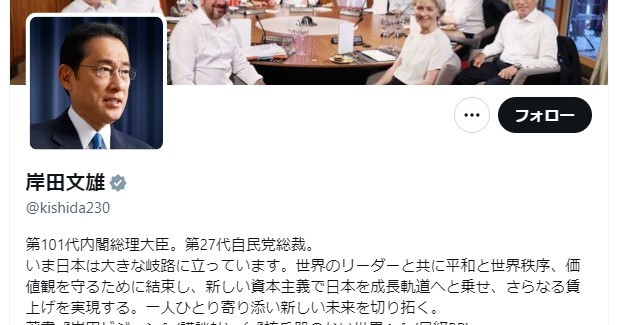

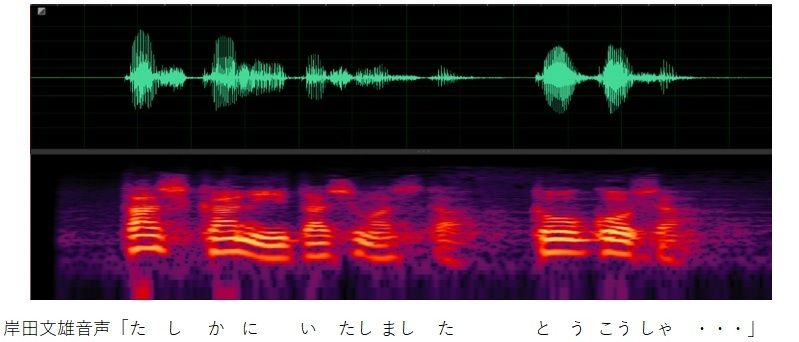

A fake video of Prime Minister Fumio Kishida, created with generative AI to mimic his voice and image and falsely displaying a news program's logo, spread widely on social media. The incident misled the public and caused reputational harm, prompting strong protests from Nippon TV and raising concerns about AI misuse. [AI generated]
