The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

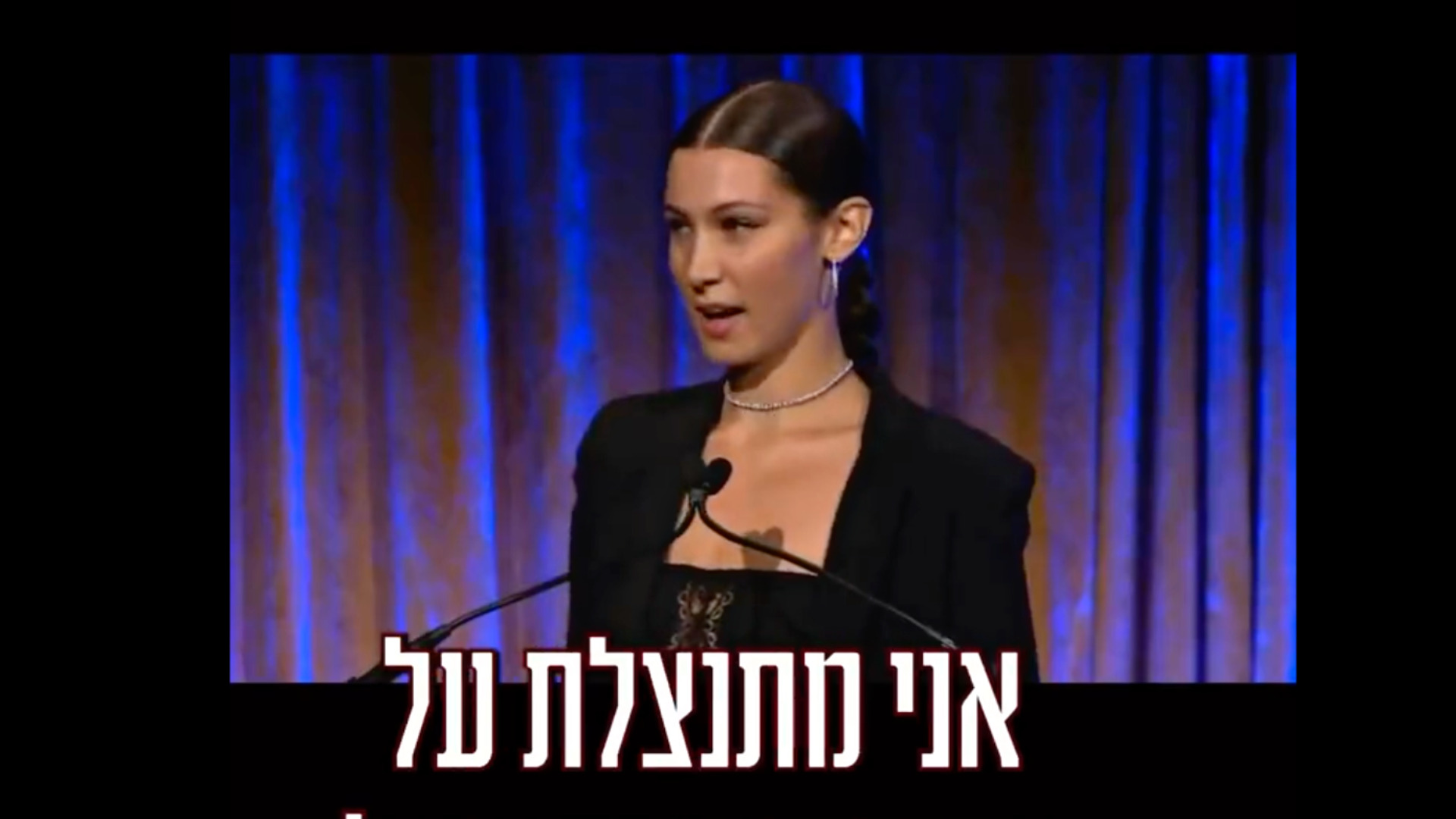

An AI-generated deepfake video falsely depicting Palestinian-American model Bella Hadid expressing support for Israel went viral, amassing around 30 million views on X (formerly Twitter). The manipulated video, created by a known producer, spread misinformation and caused public confusion and controversy, highlighting the social harm of AI-driven disinformation. [AI generated]