The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

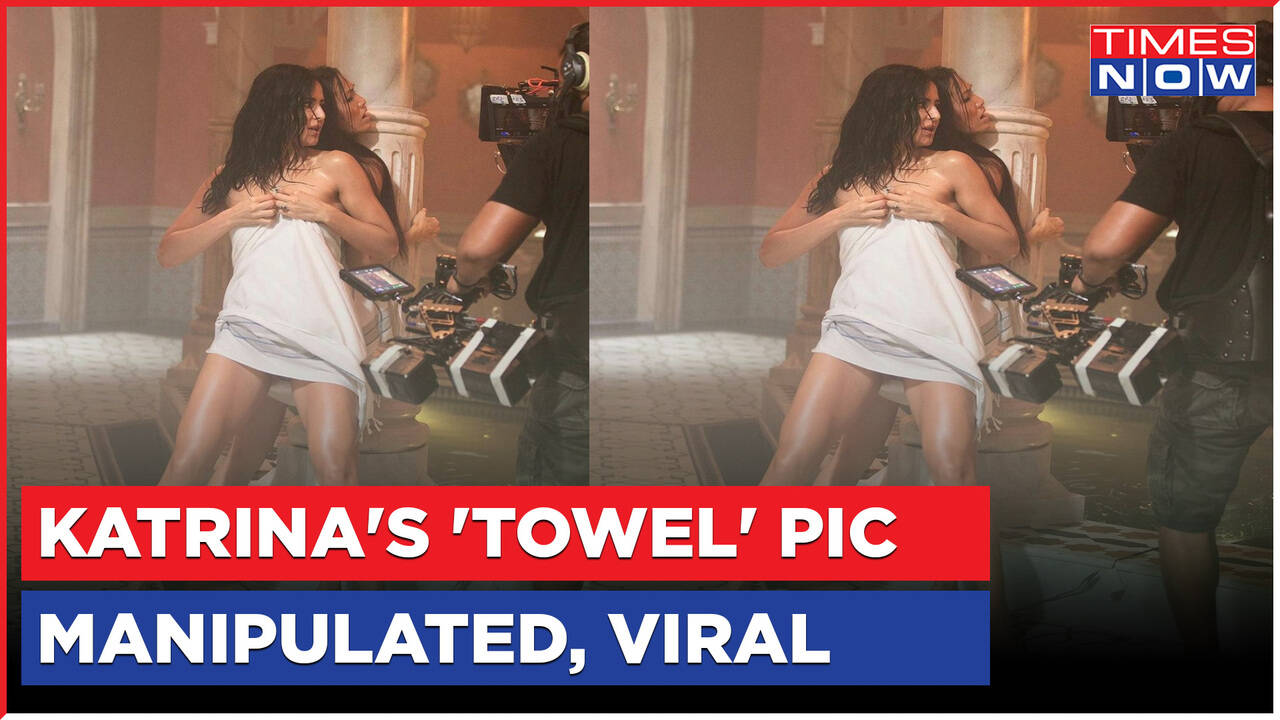

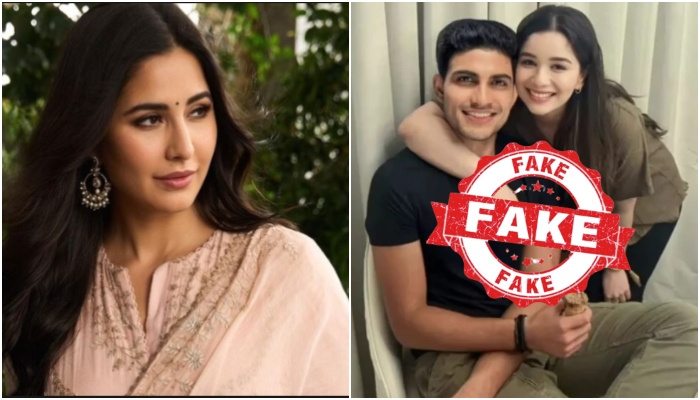

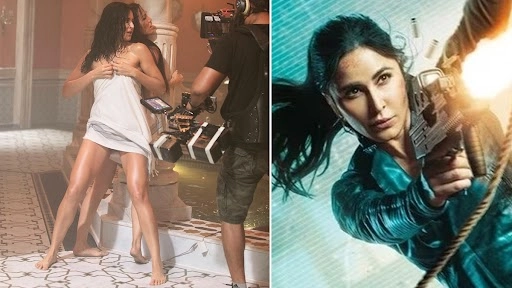

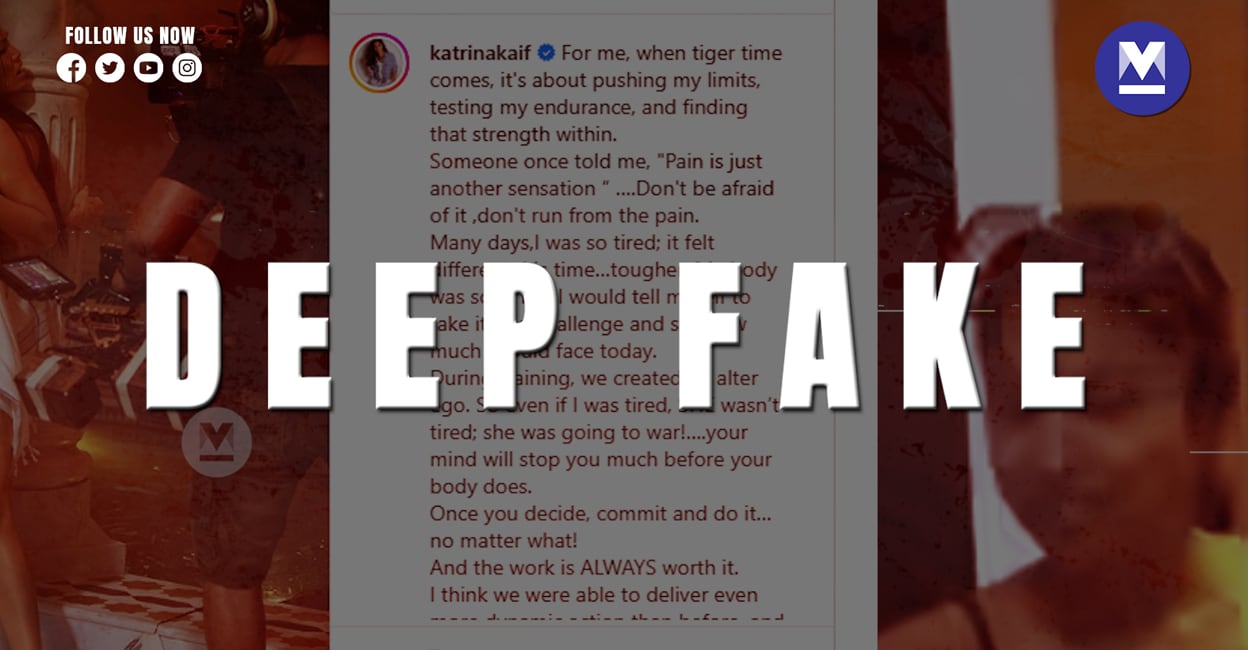

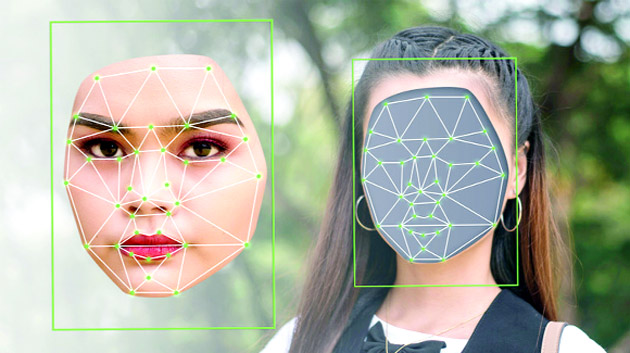

AI-powered deepfake technology was used to create and circulate explicit fake images and videos of Bollywood actresses Katrina Kaif and Rashmika Mandanna, leading to privacy violations, reputational harm, and widespread public concern. The incidents highlight both the growing misuse of AI for malicious purposes and the resulting calls for regulatory action. [AI generated]