The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

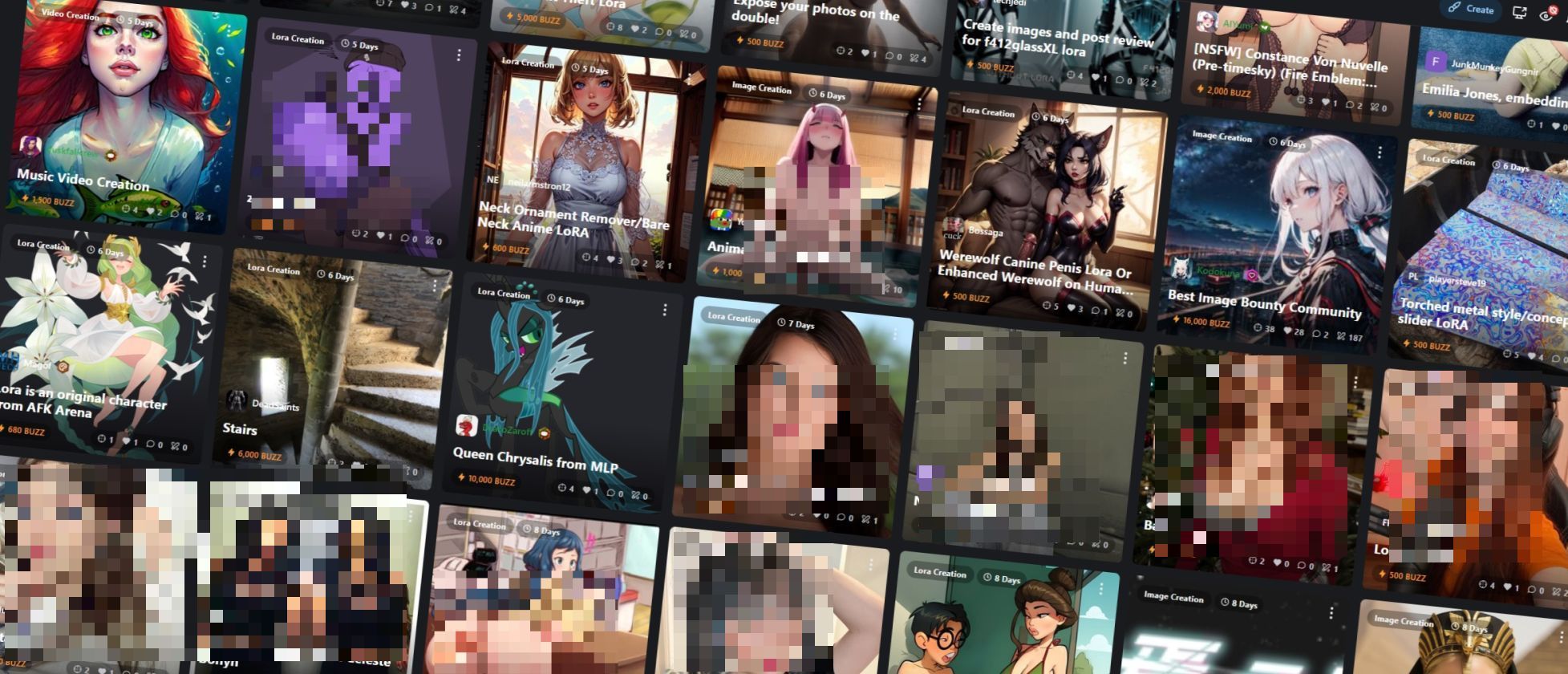

Civitai, a major AI model-sharing platform backed by Andreessen Horowitz, enables and profits from the creation of nonconsensual deepfake sexual images of real people. Its 'bounties' feature incentivizes users to generate targeted deepfake models, leading to privacy violations and harm to individuals, especially women and private citizens. [AI generated]