The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

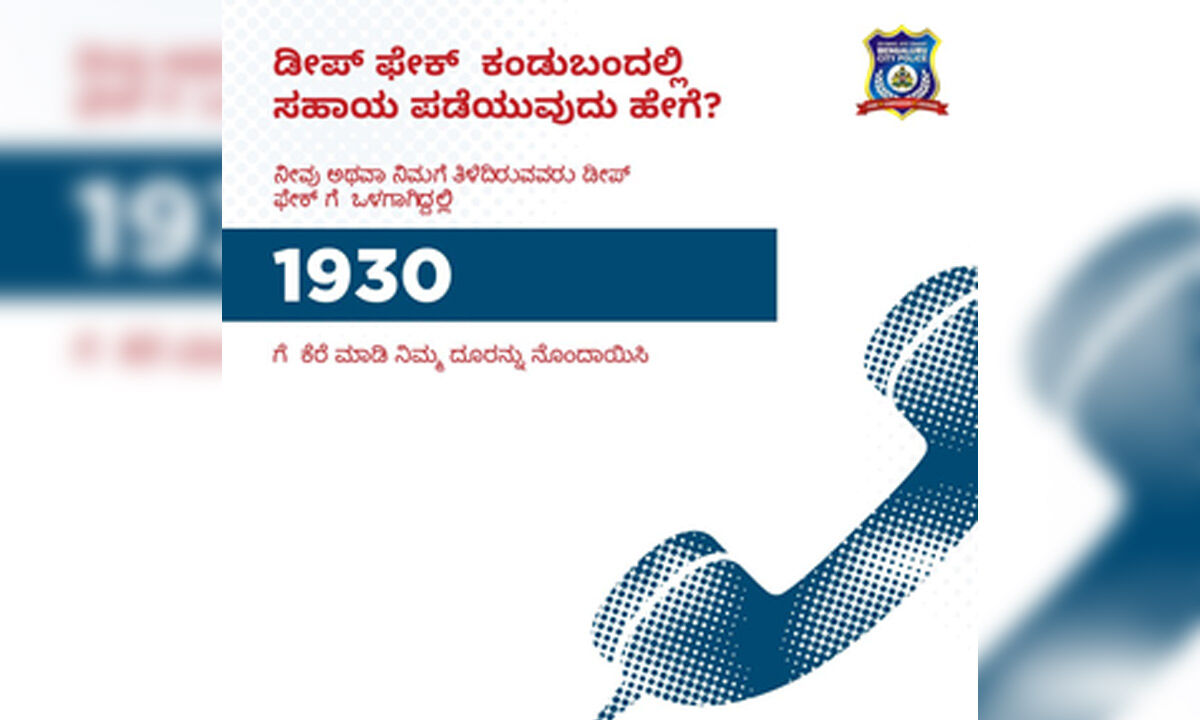

AI-generated deepfake videos featuring Indian actresses such as Kajol, Rashmika Mandanna, and Katrina Kaif have gone viral, causing reputational harm and raising privacy concerns. The Indian government has urged social media platforms to remove such content and warned of penalties, while police and cybercrime units investigate the incidents. [AI generated]
