The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

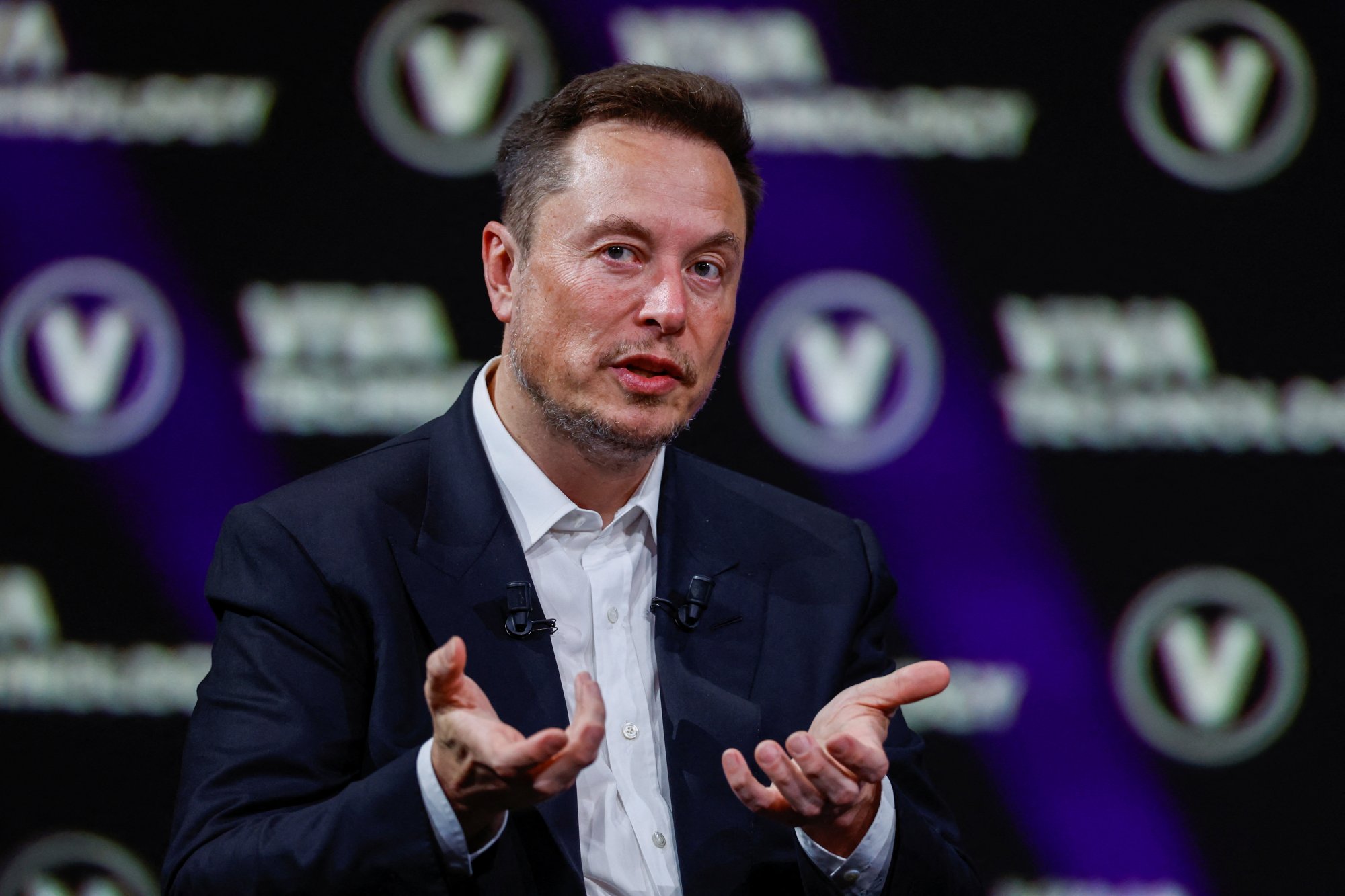

After Elon Musk's takeover of X (formerly Twitter), AI-driven content moderation declined drastically: 98% of hate-filled posts, including calls for violence and Holocaust denial, remained online. Researchers found that the platform failed to remove this harmful content, allowing hate speech and incitement to continue.[AI generated]

:strip_icc()/i.s3.glbimg.com/v1/AUTH_da025474c0c44edd99332dddb09cabe8/internal_photos/bs/2023/y/Z/AQcquUQjevNARBX6VOMQ/plataforma-x-ex-twitter-bloomberg.jpg)