The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

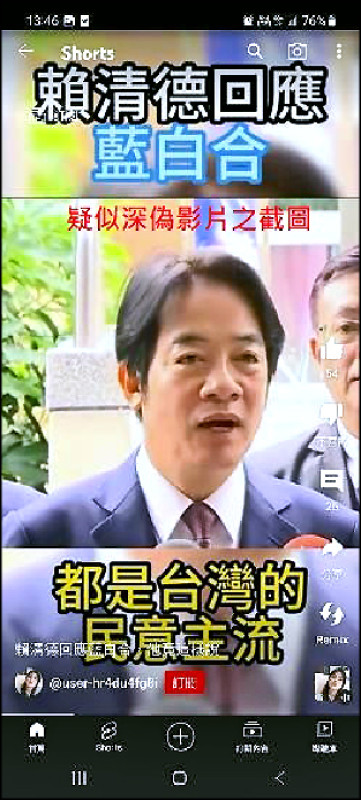

AI deepfake technology was used to create and spread a manipulated video falsely depicting Taiwanese presidential candidate Lai Ching-te endorsing political rivals. The video, widely circulated on social media, aimed to mislead voters and influence election outcomes, prompting authorities to launch a criminal investigation and warn the public about deepfake risks. [AI generated]