The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

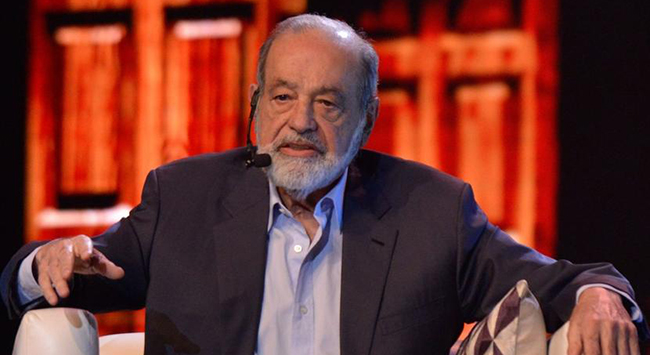

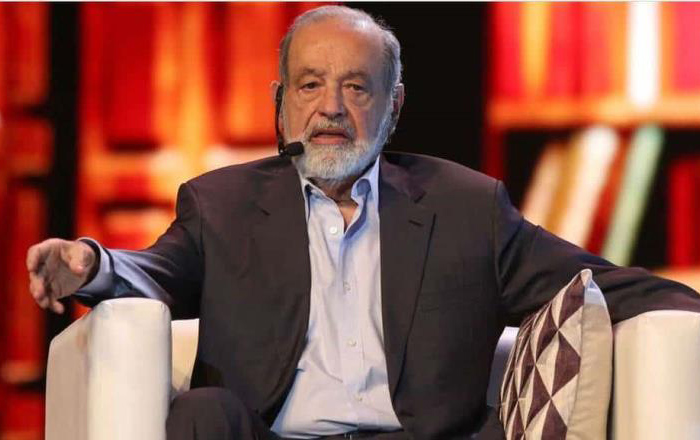

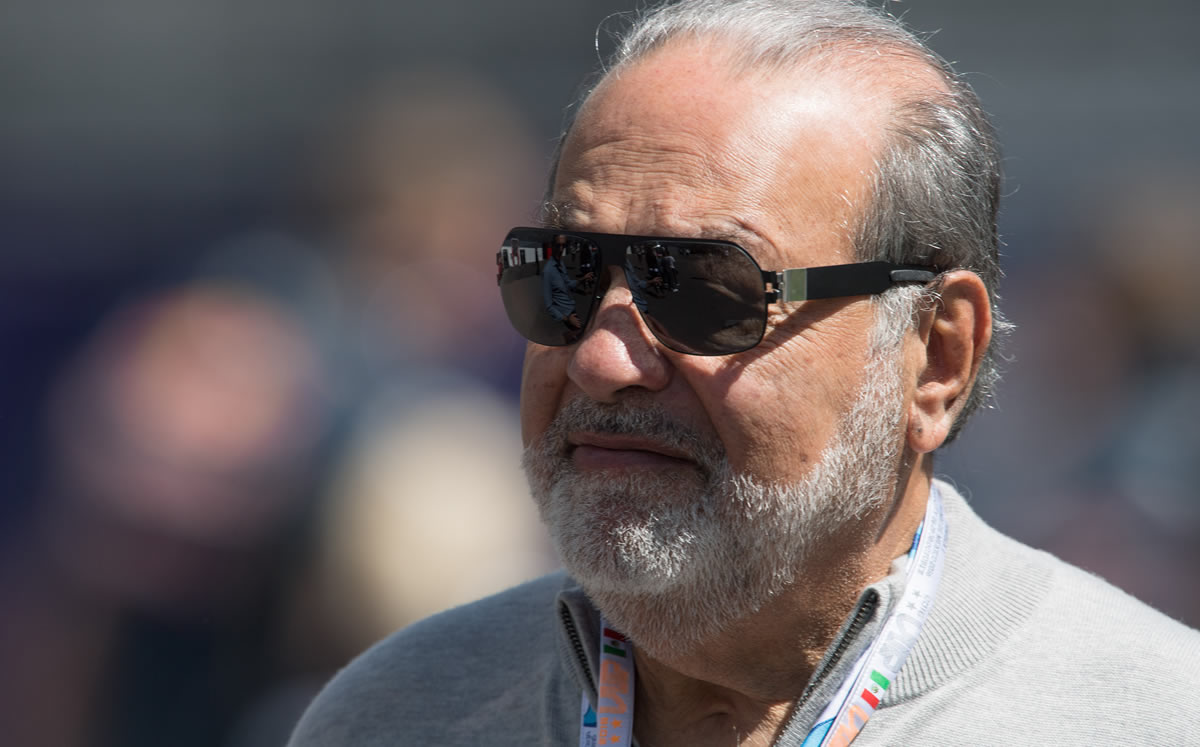

A deepfake video using AI to manipulate the image and voice of businessman Carlos Slim circulated on social media, falsely promoting a fraudulent investment app. The Mexican financial authority Condusef warned the public about the scam, highlighting the risks of AI-enabled deception and financial fraud.[AI generated]