The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

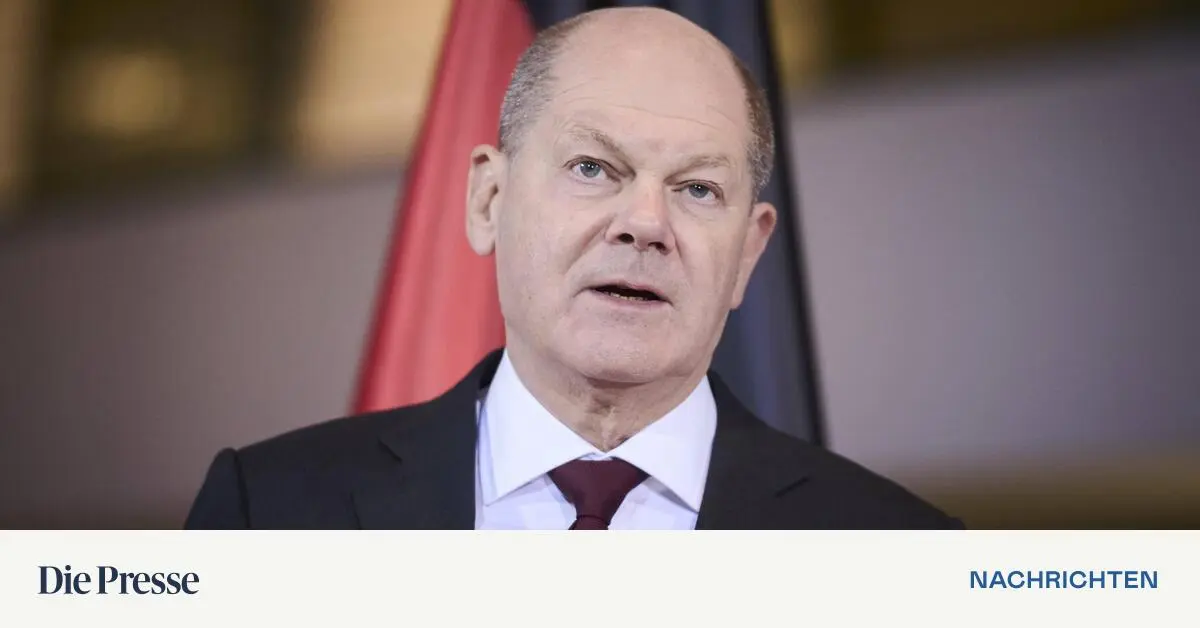

The satirical art group Zentrum für Politische Schönheit used AI to create a deepfake video of Chancellor Olaf Scholz falsely announcing a ban on the AfD party. The realistic video spread online, prompting government concern over manipulation and misinformation, and leading to the formation of a task force to address AI-driven disinformation. [AI generated]