The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

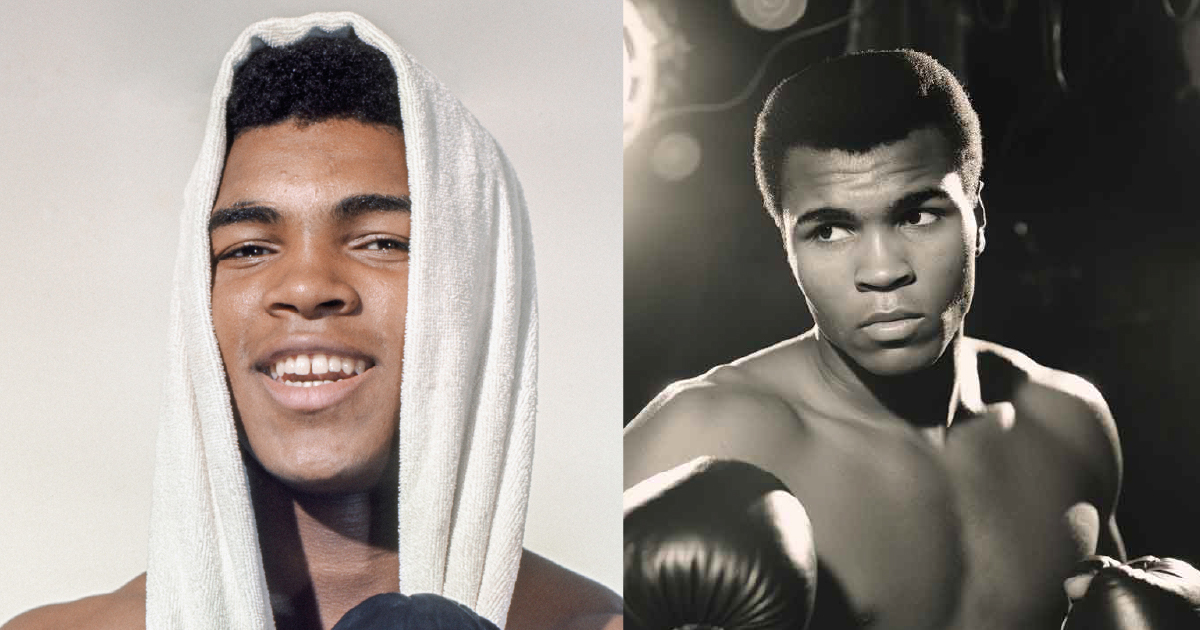

AI-generated images of Hawaiian singer Israel Kamakawiwoʻole, created with tools like Midjourney, have appeared as top results in Google Image Search, misleading users by presenting fake visuals as authentic. Despite Google's promises to label such content, the lack of clear identification has resulted in misinformation and public confusion. [AI generated]