The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

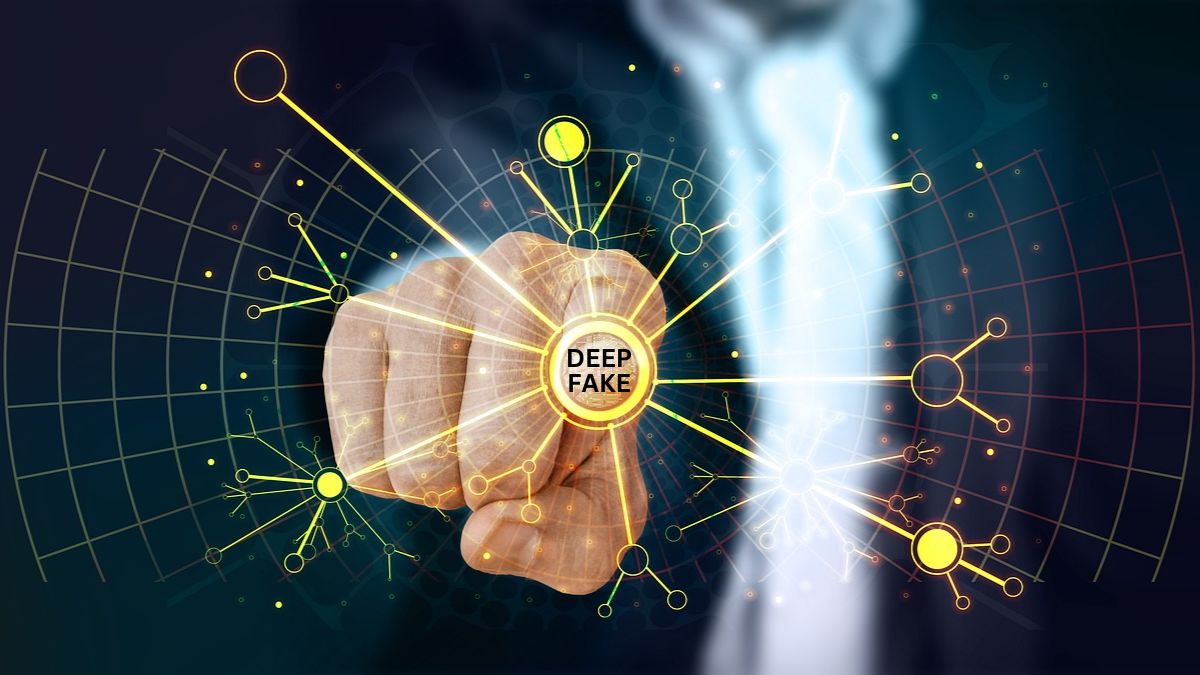

Bollywood actress Alia Bhatt became the latest victim of AI-generated deepfake videos, with her face superimposed onto another person in an obscene viral clip. The incident, part of a wider trend affecting several celebrities, has sparked public concern and calls for stricter action against AI-driven identity theft and misinformation. [AI generated]
