The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

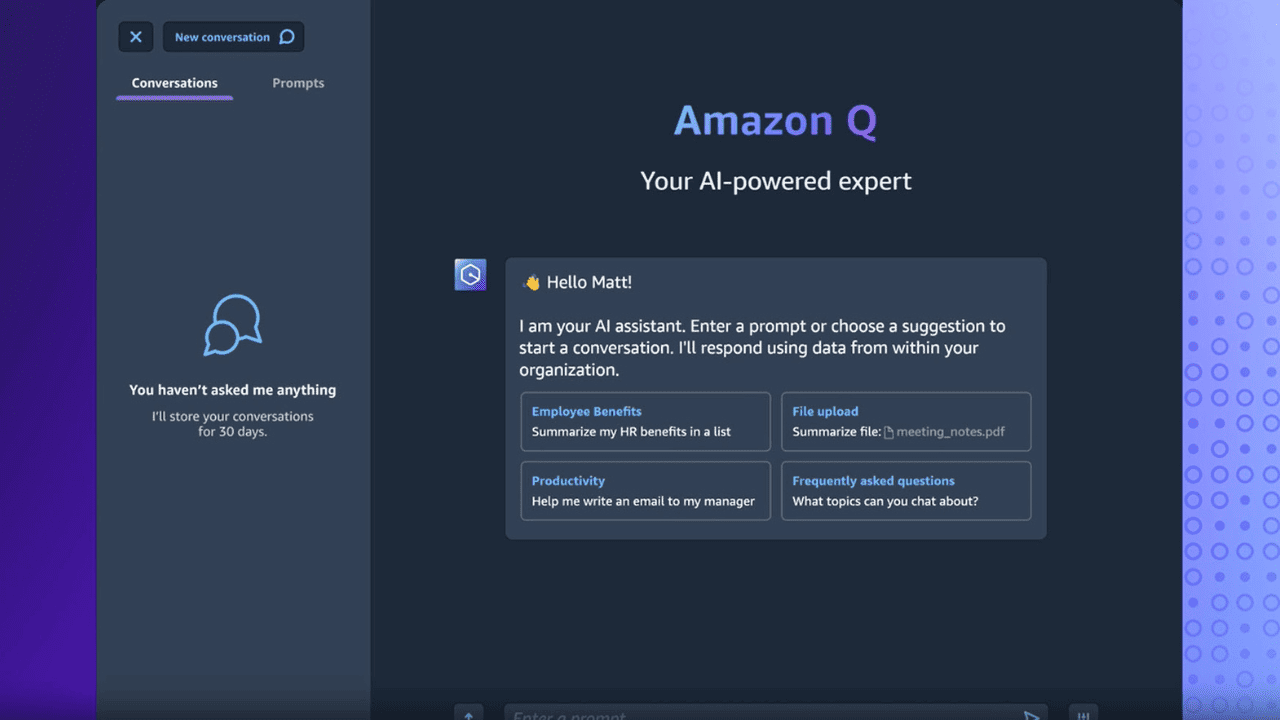

Amazon's AI chatbot Q experienced severe hallucinations that led to the leakage of confidential information, such as AWS data center locations and internal programs. Employees flagged the incident as critical, prompting an urgent engineering response. Although Amazon downplayed the incident, the malfunction raised significant privacy and security concerns. [AI generated]