The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

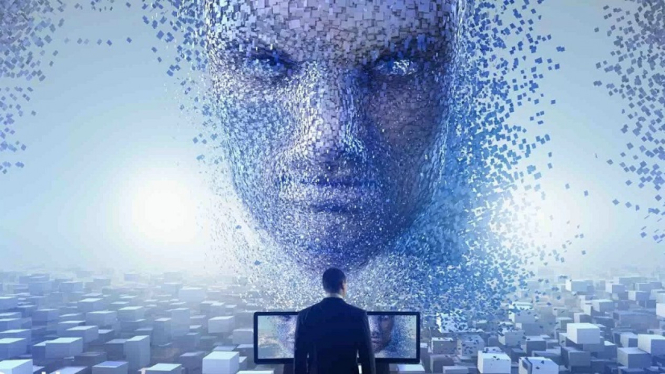

An AI-generated deepfake video falsely depicted President Joko Widodo delivering a speech in Mandarin, misleading the public. Indonesian officials confirmed the video was manipulated and warned about the broader risks of deepfake AI in spreading disinformation and undermining trust in information sources. [AI generated]
