The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

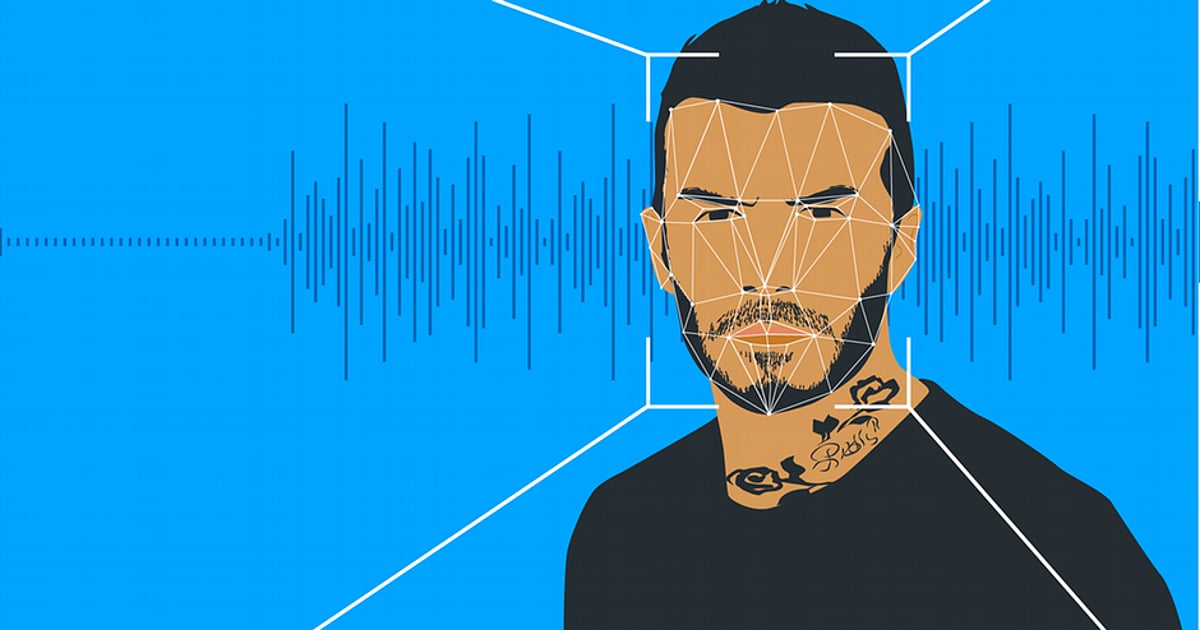

Infosys co-founder Narayana Murthy has warned the public about AI-generated deepfake videos and images that falsely claim he endorses automated trading apps. These deepfakes, spread via social media and fraudulent websites, have caused reputational harm and risk drawing people into financial scams. Murthy urges the public to stay vigilant and to report such incidents. [AI generated]