The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

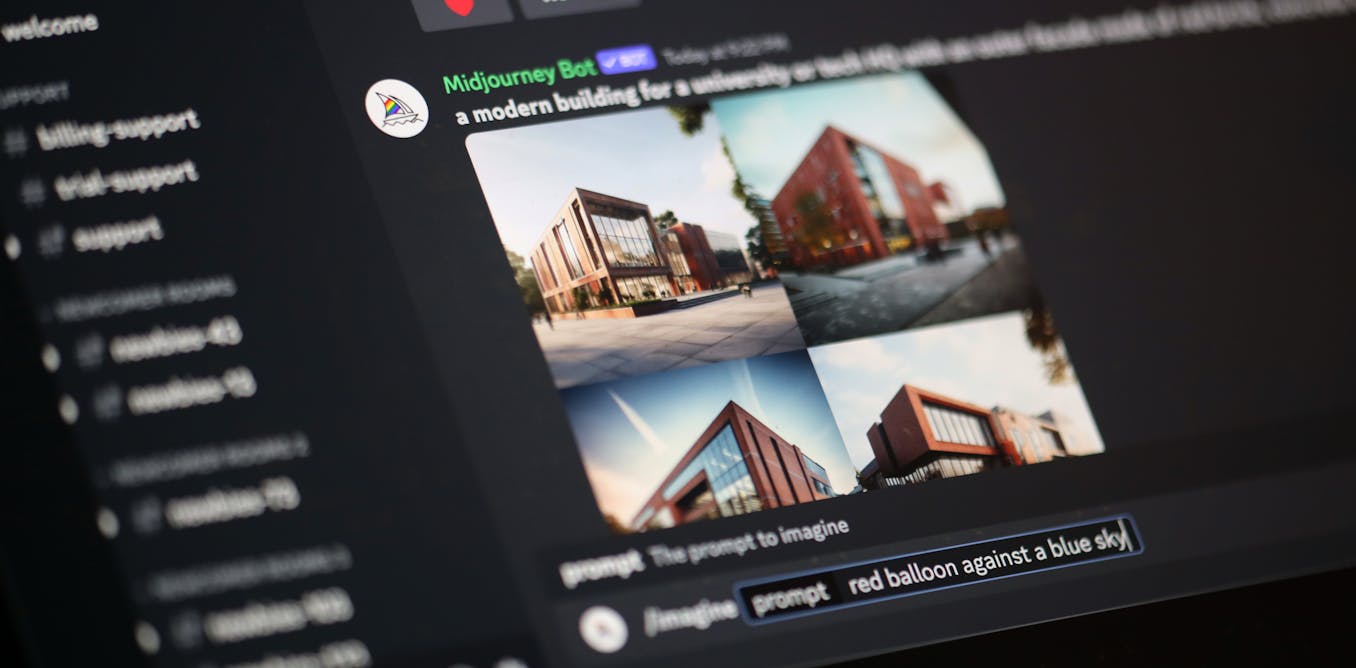

Artists are intentionally altering their images with tools like Nightshade to 'poison' the datasets used to train AI image generators such as Midjourney and DALL-E. Models trained on the altered images can produce incorrect or nonsensical outputs, which degrades their reliability and utility. The artists intend this sabotage as a protest against unauthorized use of their work. [AI generated]