The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

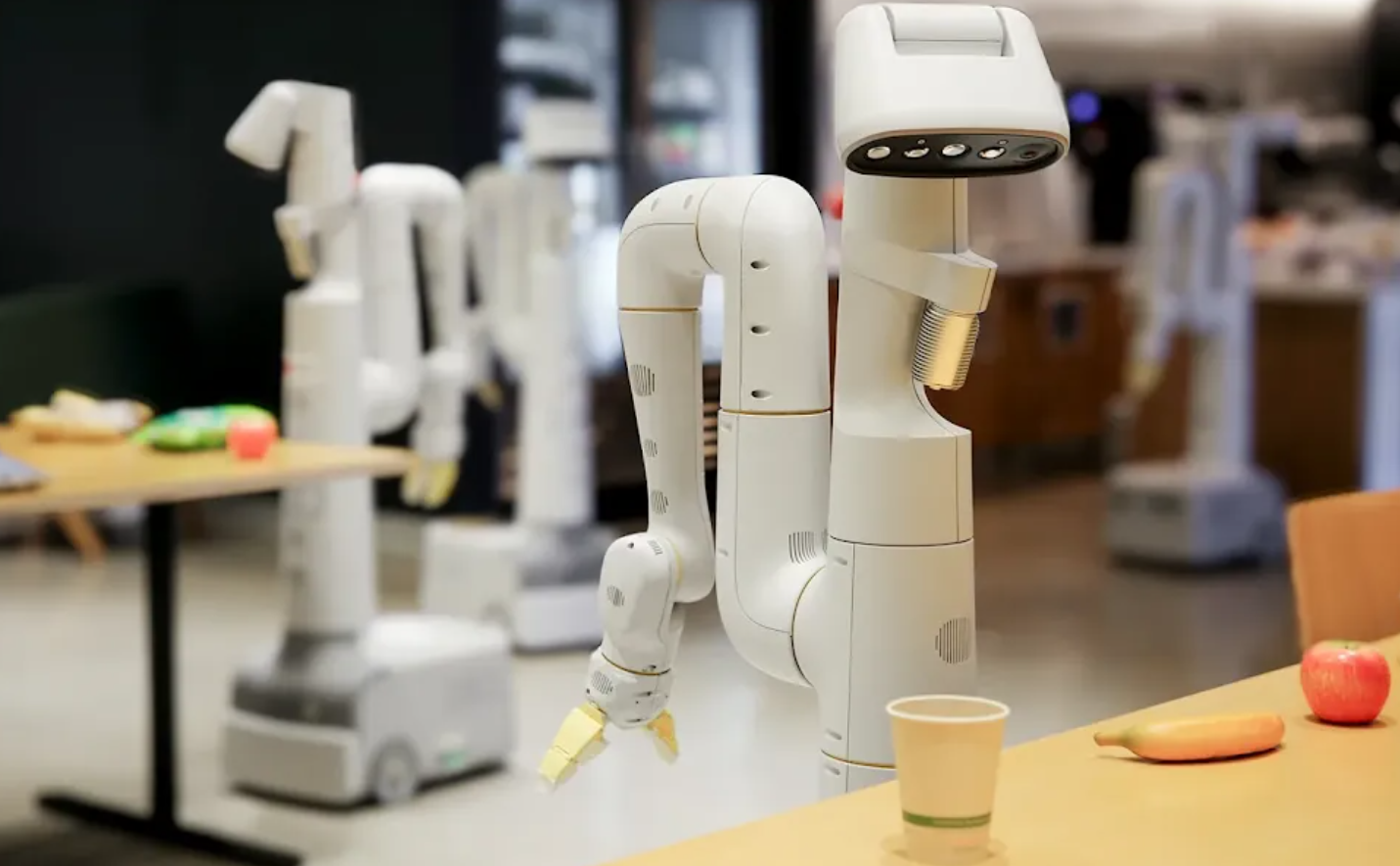

India has approved AI-powered autonomous land robots for border patrol, aiming to reduce soldier casualties. Google DeepMind unveiled three robotics advances along with Asimov-inspired safety rules intended to prevent robots from causing harm. China's Communist Party plans to mass-produce humanoid robots with AI "brains" for manufacturing and potential military use, raising security and ethical concerns. [AI generated]