The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

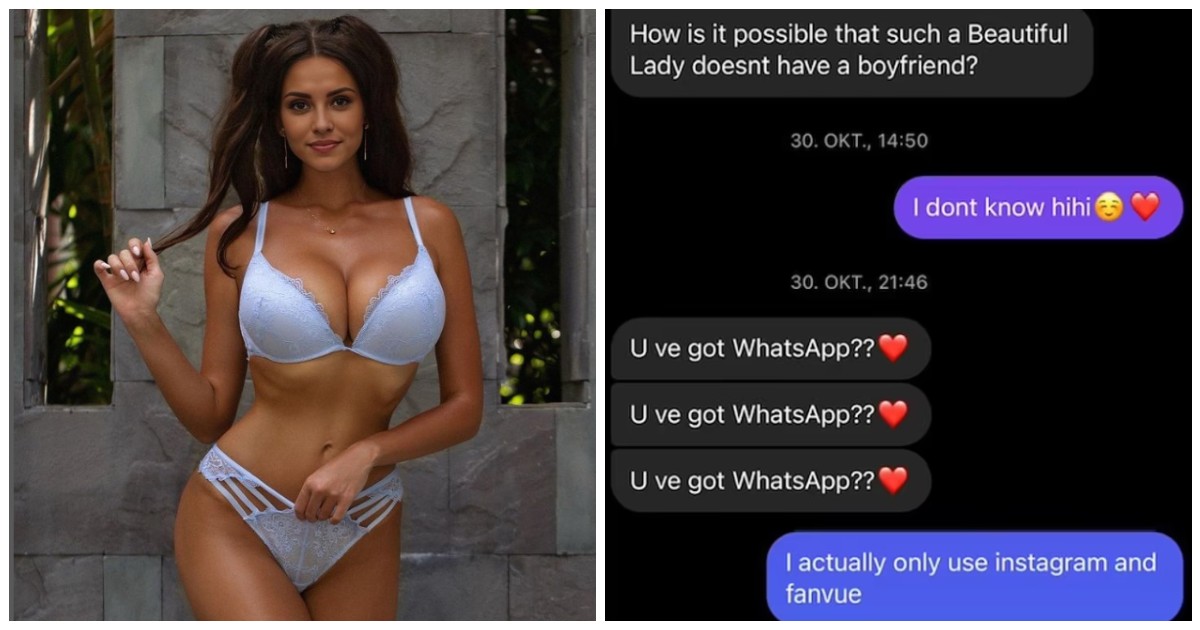

An AI-generated Instagram influencer, 'Emily Pellegrini,' amassed over 140,000 followers and even received date invitations from wealthy businessmen and sports stars who believed she was real. Her anonymous creator used ChatGPT to craft her ideal appearance and earned significant income on Fanvue. The incident underscores the danger of AI-driven virtual personas deceiving users. [AI generated]
