The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

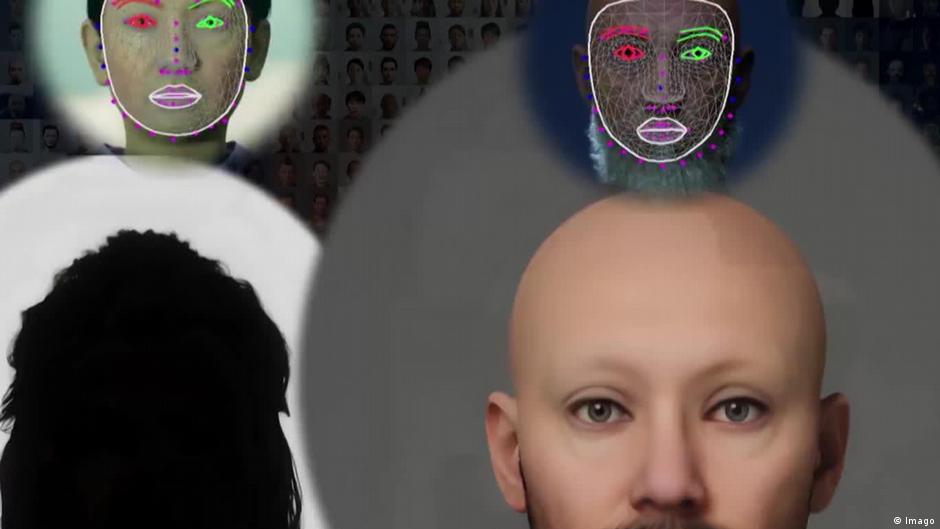

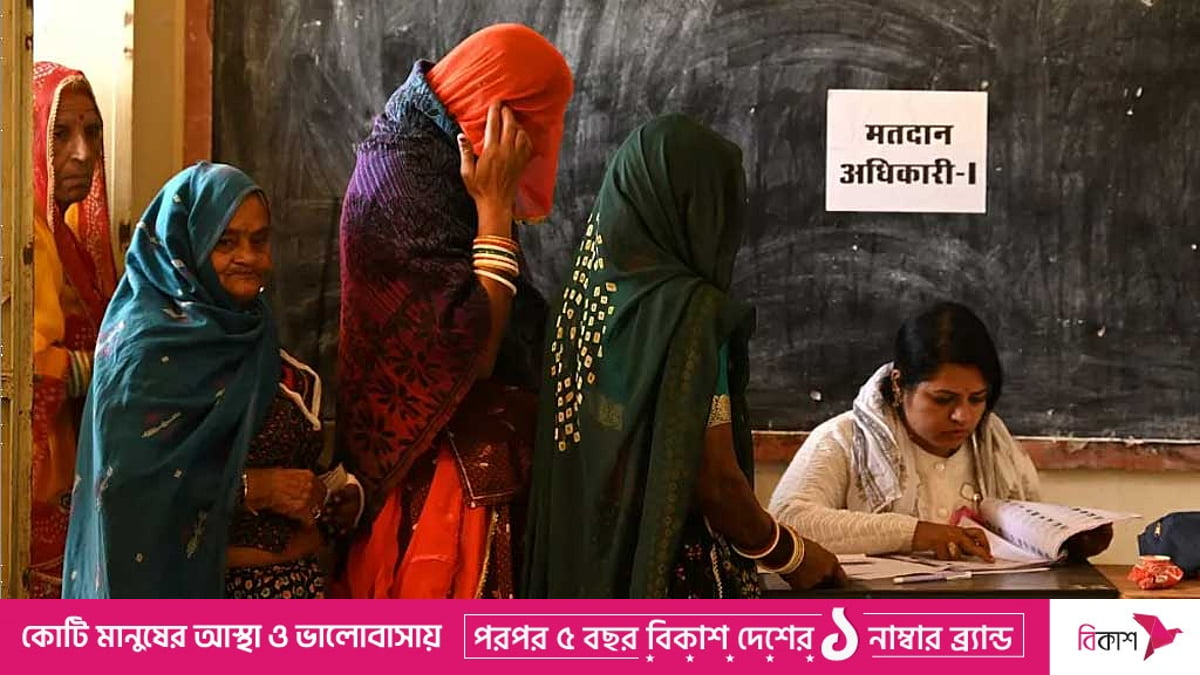

AI-generated deepfake videos and voice clones have been used worldwide to spread scams and election misinformation, targeting figures ranging from Singapore’s PM Lee and Indonesia’s leaders to campaign rivals in India. Experts warn that these convincing fakes exploit advances in AI, influence voter behavior, and highlight insufficient platform and government safeguards. [AI generated]
