The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

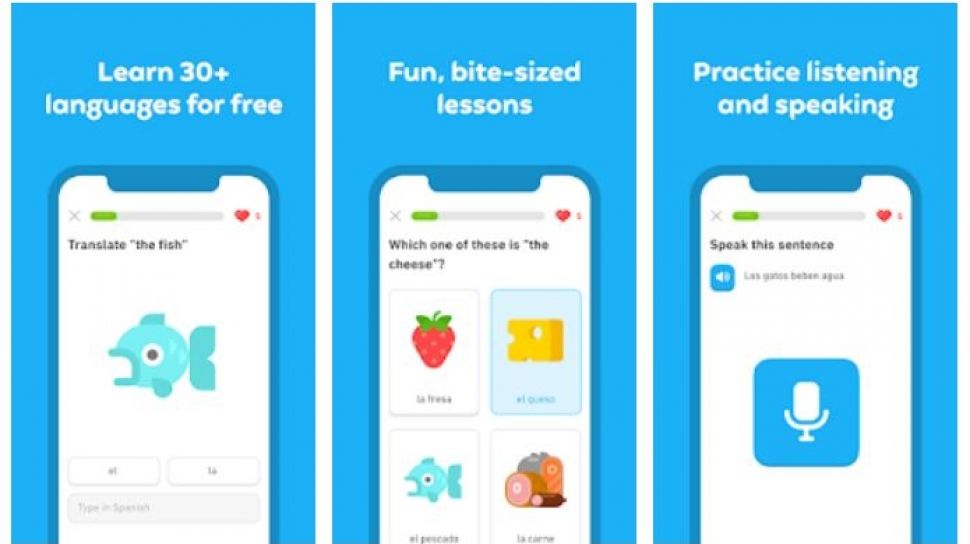

Duolingo cut around 10% of its US-based contractors and replaced their work with AI-driven content generation. Humans will still review AI outputs for accuracy, although former contractors report that errors have appeared in lessons since the shift. The move is aimed at reducing costs and reflects a broader trend of AI displacing human labor.[AI generated]
