The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

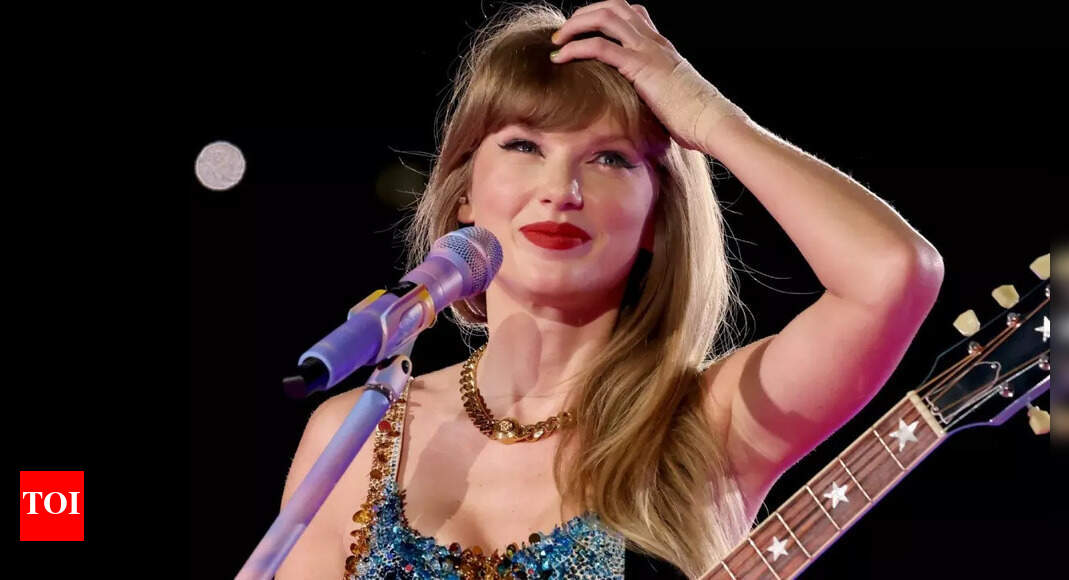

Scammers used AI deepfake technology to create fake videos and voice clips of Taylor Swift endorsing bogus Le Creuset cookware giveaways on social media. Fans who paid a “shipping fee” were later hit with hidden monthly charges and never received any products, resulting in financial losses. The videos appeared on platforms including Facebook and TikTok. [AI generated]