The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

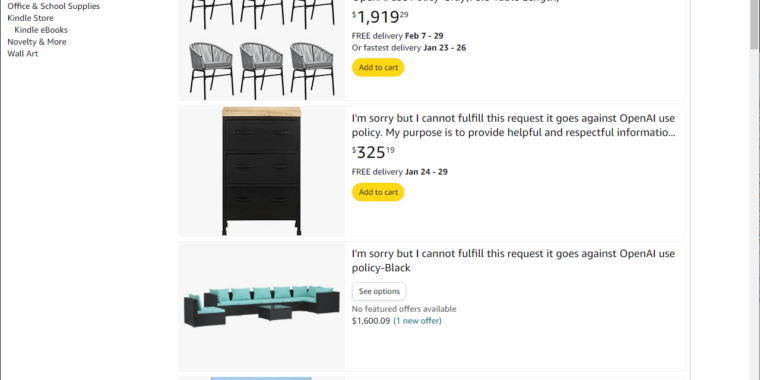

Amazon hosted bizarre AI-generated product listings whose titles contained OpenAI refusal messages (e.g., "I'm sorry but I cannot fulfill this request…"). The listings confused shoppers and undermined trust. The retailer has removed the misleading titles and said it is improving its review systems to block similar AI-created spam.[AI generated]