The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

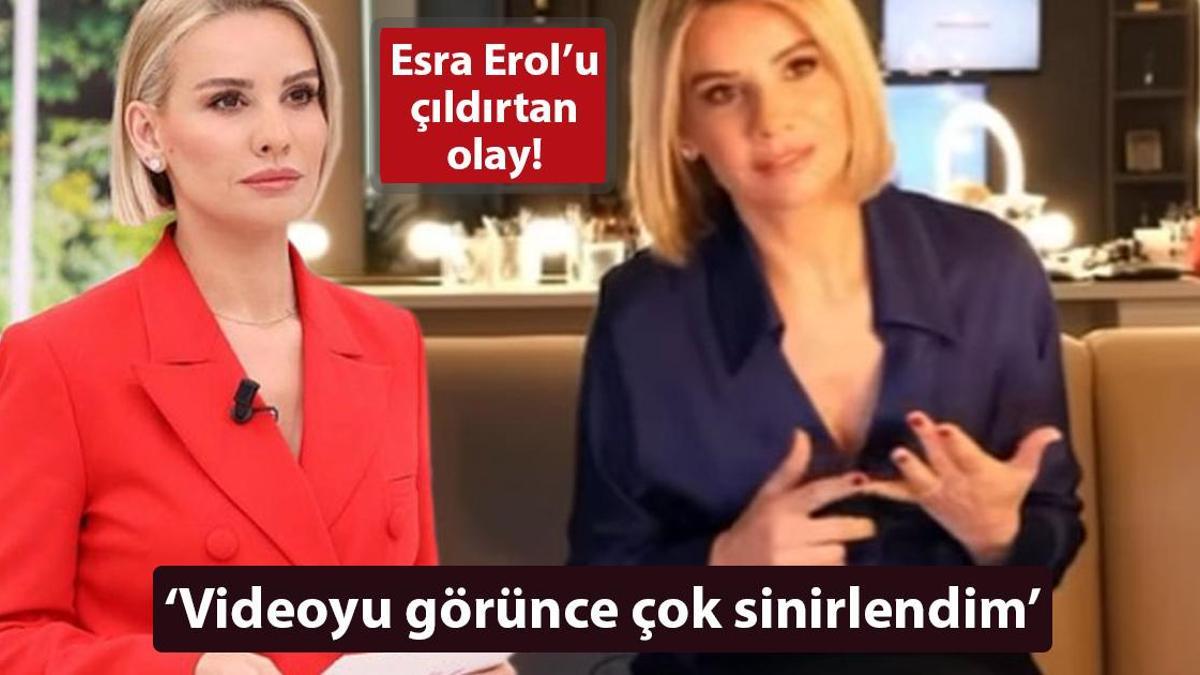

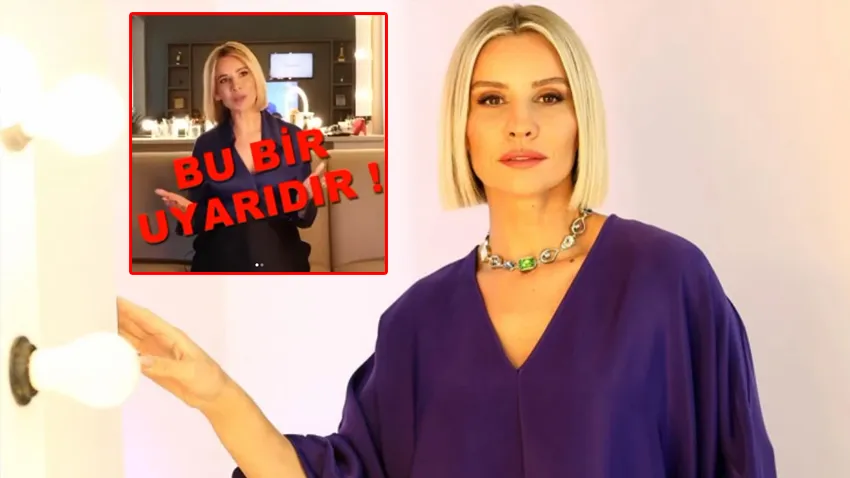

Scammers used deepfake AI to manipulate video and audio of Turkish TV host Esra Erol, adding BOTAS logos and false investment pitches. The fake ad circulated on social media to defraud users. Erol filed a criminal complaint and initiated legal action against the unauthorized use of her likeness and voice. [AI generated]