The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

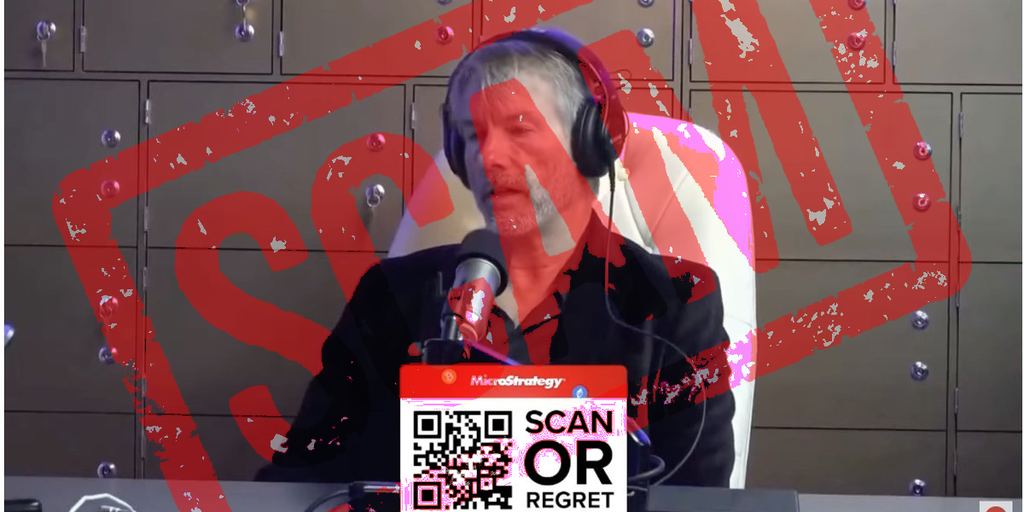

MicroStrategy chairman Michael Saylor warned that scammers are using AI-generated deepfake videos of him promising to double Bitcoin investments. These deepfakes prompt viewers to scan a QR code and send BTC to criminals. His team removes about 80 fake videos daily and urges users to verify claims before sending funds. [AI generated]