The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

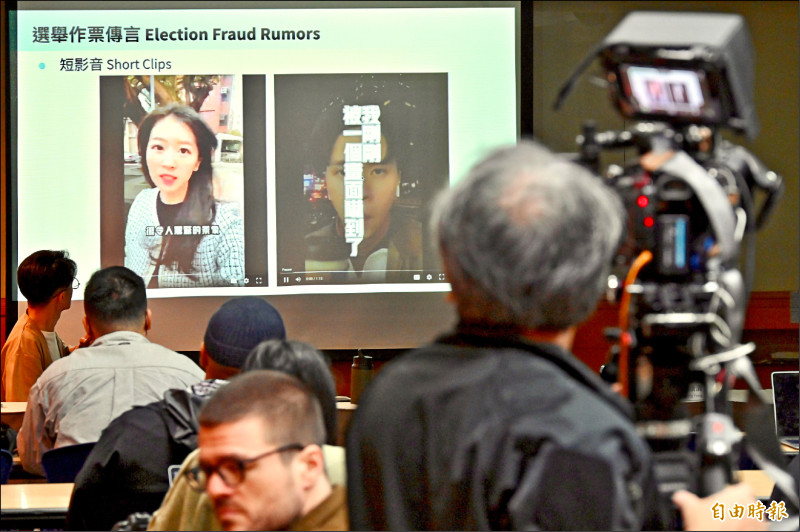

Beijing’s “China Consular” app compels overseas nationals to upload personal and travel details under the guise of consular services, enabling cross‐border monitoring and intimidation. Concurrently, China‐linked actors leveraged generative AI and fake accounts to spread tailored disinformation in Taiwan’s presidential poll, deepening divisions and eroding trust. [AI generated]