The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

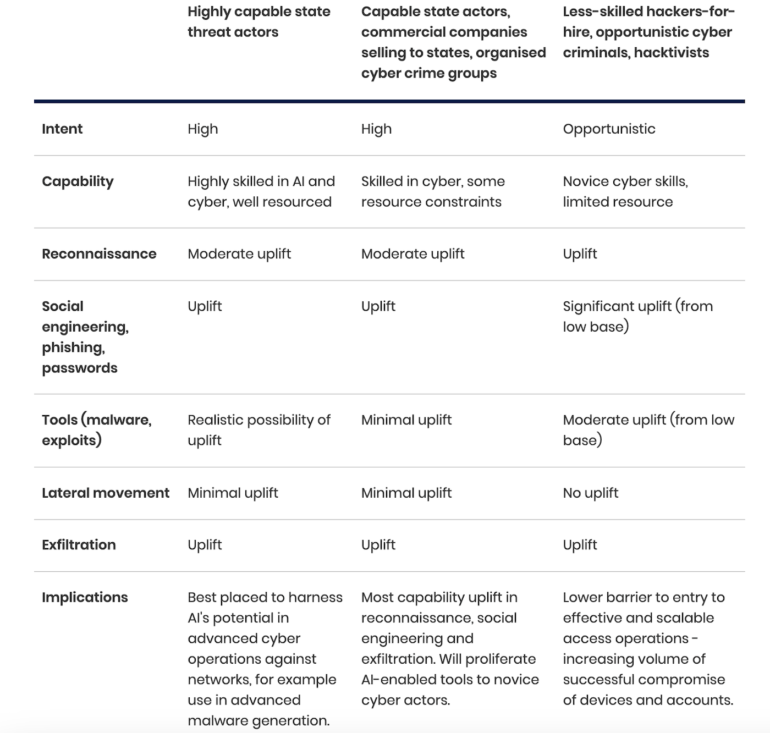

The UK's National Cyber Security Centre warns that AI is already being used to automate phishing and ransomware, lowering the skill barrier for hackers-for-hire and hacktivists. This AI-driven boost in attack sophistication and targeting is fueling a fresh wave of cyberattacks on UK companies and critical infrastructure.[AI generated]