The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

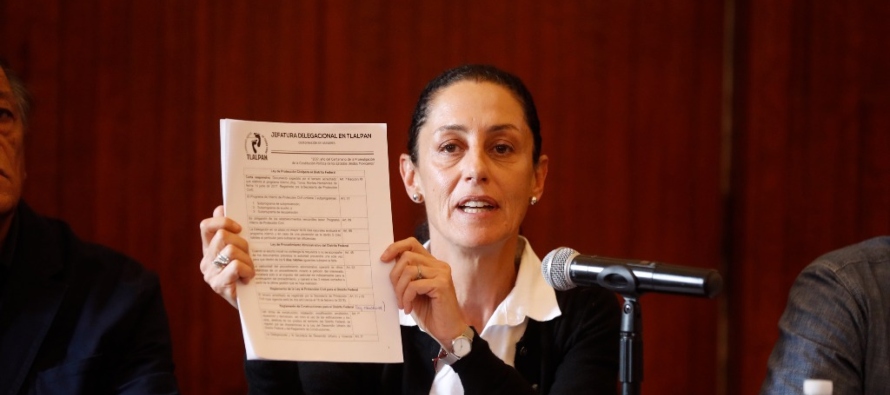

Presidential candidate Claudia Sheinbaum has denounced an AI-generated deepfake video that uses her likeness and voice to solicit 4,000-peso investments with false promises of significant returns. The manipulated content is circulating widely on social media and messaging apps, prompting her to report the fraud and warn the public against the scheme.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions the use of AI to create a fake video impersonating Claudia Sheinbaum, which is being used to solicit money fraudulently. This constitutes direct harm to people (financial fraud) and harm to communities (misinformation and deception). The AI system's role is pivotal, as it enables the creation of realistic fake content used for fraudulent purposes. Therefore, this qualifies as an AI Incident under the framework, as the AI system's use has directly led to harm.[AI generated]
