The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

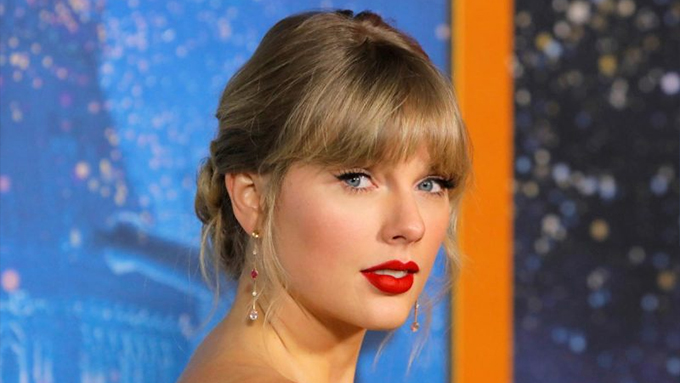

A surge of AI-generated, non-consensual pornographic deepfakes of Taylor Swift spread across X, Reddit, Meta platforms and Telegram. X blocked related searches and removed the posts, while the White House urged Congress to pass legislation. Senators led by Dick Durbin introduced the DEFIANCE Act, which would allow victims to sue the creators of such deepfakes.[AI generated]