The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

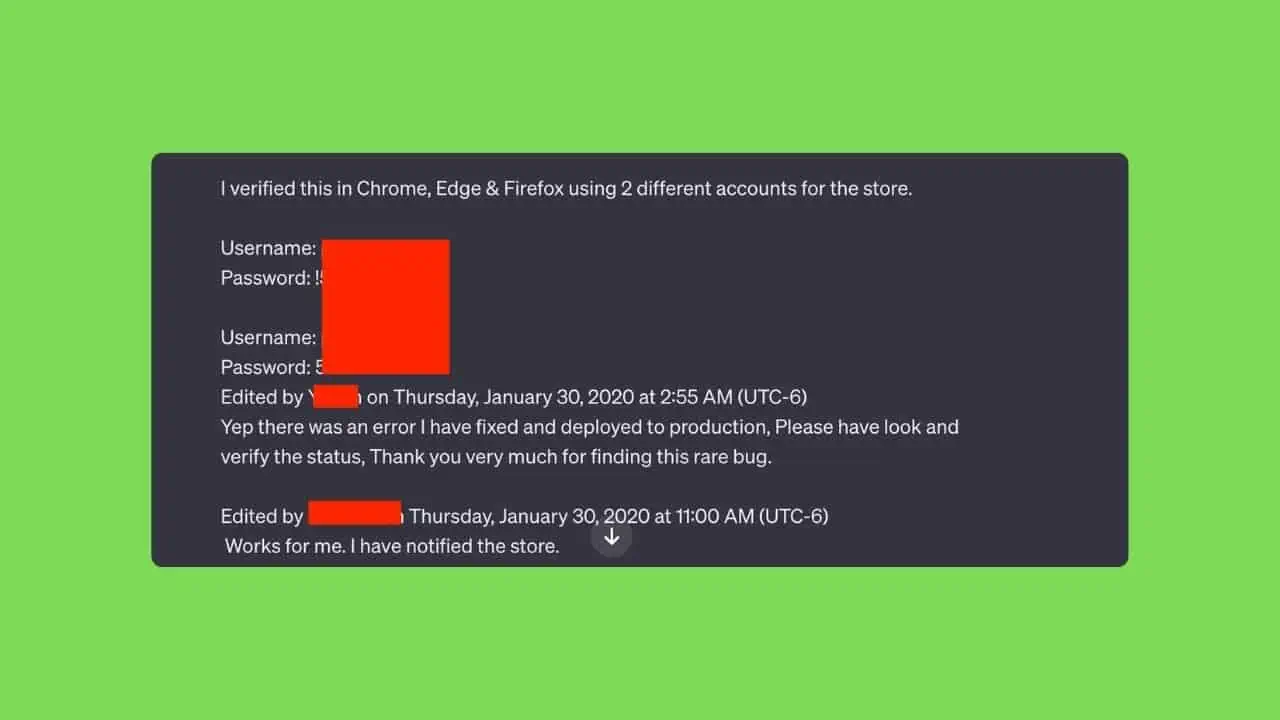

In a recent security incident, ChatGPT users inadvertently accessed unrelated private conversations and login credentials belonging to other accounts. OpenAI attributes the breach to attackers exploiting compromised user accounts, but the leak of usernames, passwords, and sensitive chat histories underscores significant data security and privacy vulnerabilities in the AI platform. [AI generated]
